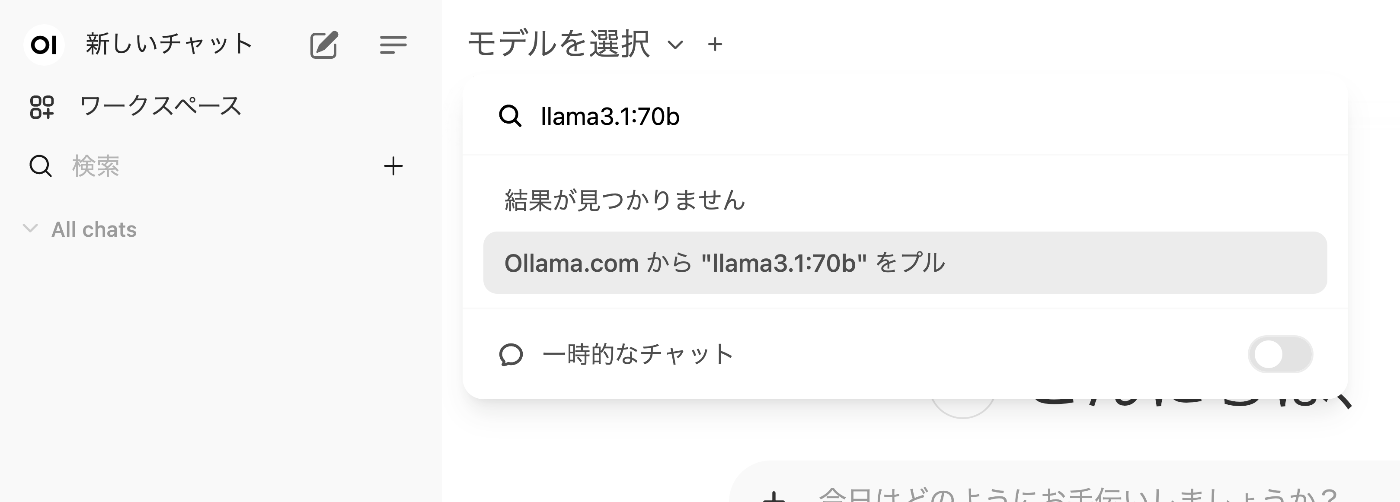

🧪 Running LLM-jp-4 Locally on a MacBook Pro M4 Max 128GB with Ollama’s OpenAI-Compatible API

Notes and measurements from running LLM-jp-4 8B locally on a MacBook Pro M4 Max 128GB and exposing it through Ollama’s OpenAI-compatible API

ai, llm, mac, ollama

Notes on LLM-Related Tools

Running a Local LLM Using mdx.jp 1GPU Pack and Ollama