🤖 Exposing vLLM on mdx.jp Through Cloudflare Tunnel as an OpenAI-Compatible API

How I exposed a vLLM server running on mdx.jp through Cloudflare Tunnel and used it as an OpenAI-compatible API, including the practical pitfalls

Notes from running the official LLM-jp-4-32b-a3b-thinking model on an mdx.jp A100 40GB x2 server, and from switching to a vLLM deployment after Transformers ran out of memory (OOM)

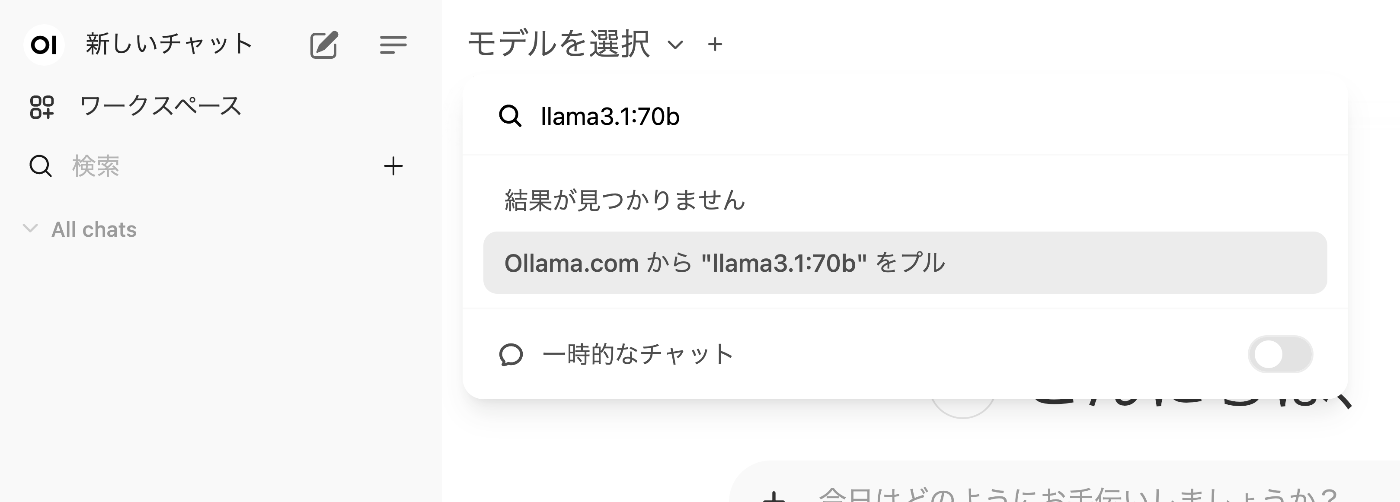

Notes and measurements from running LLM-jp-4 8B locally on a MacBook Pro M4 Max 128GB and exposing it through Ollama’s OpenAI-compatible API

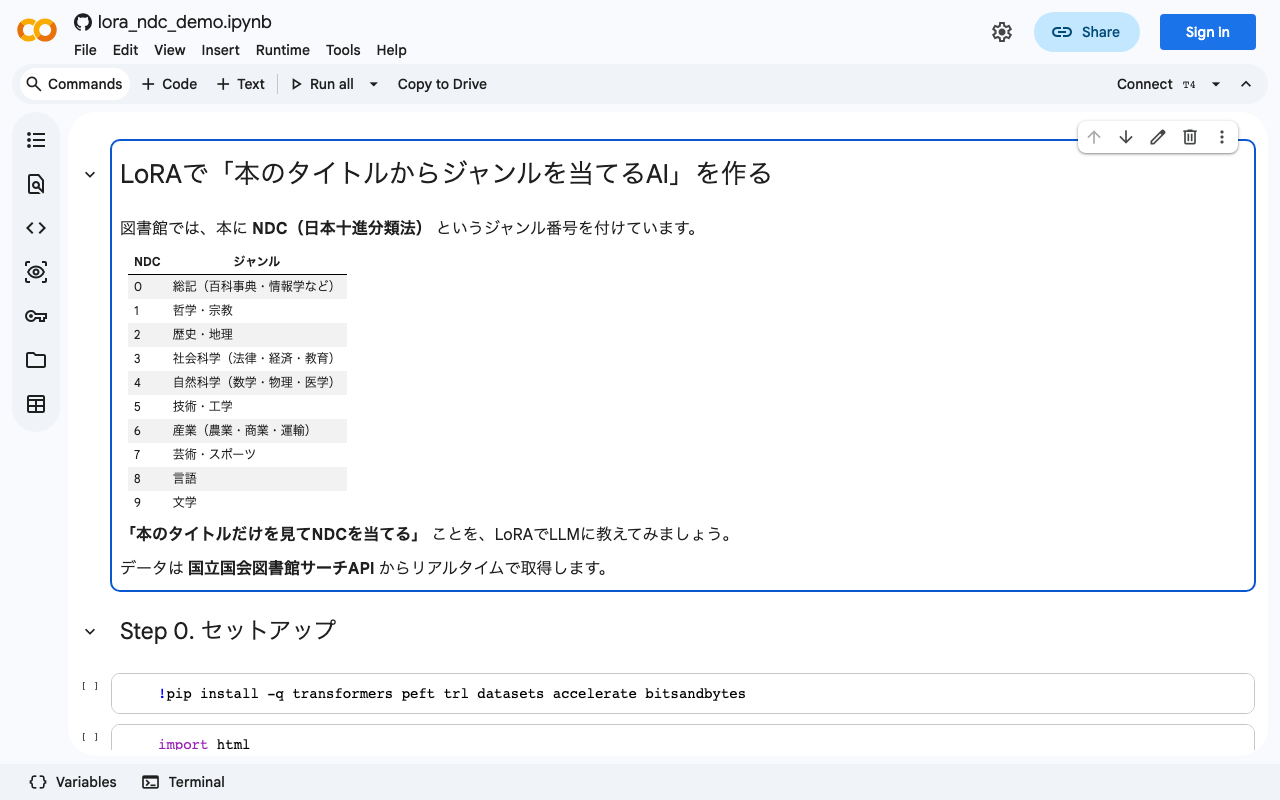

A hands-on tutorial on collecting bibliographic data from the National Diet Library Search API and fine-tuning a 1.8B Japanese LLM with LoRA to classify books by their NDC (Nippon Decimal Classification) category from title alone.

Azure OpenAI GPT-4 vs Document Intelligence: Comparative Evaluation of Japanese Vertical Text OCR

LLM-Based Manuscript Paper OCR Performance Comparison: Verification of Vertical Japanese Recognition Accuracy

Notes on LLM-Related Tools

Running a Local LLM Using mdx.jp 1GPU Pack and Ollama