Converting Word to TEI/XML

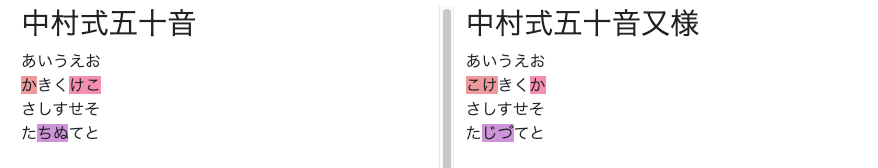

Overview

I had an opportunity to convert Word files to TEI/XML. While investigating, I found, in addition to official TEI tools such as TEIGarage Conversion, a conversion example in TEI Publisher:

https://teipublisher.com/exist/apps/tei-publisher/test/test.docx.xml

This example appears to convert Word style information into TEI tags, so I tried the same approach. For this project I used the python-docx library so that the conversion can run independently of TEI Publisher.

Word File

I created a prototype Word file like the one below. All styles are provisional, but I created styles such as “tei:persName” and “tei:warichu” and changed their visual appearance, such as color. The idea is that applying these styles to text provides simple structuring. ...
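To make the style-to-tag idea concrete, here is a minimal sketch of how it might look with python-docx. It is not the actual conversion script: the function names `runs_to_tei` and `docx_to_tei_paragraphs` are hypothetical, and it assumes that the “tei:…” styles are character styles applied to runs, that the part after the `tei:` prefix is used verbatim as the element name, and that each Word paragraph becomes one `<p>`.

```python
from xml.sax.saxutils import escape

# Assumed convention: a character style named "tei:<element>" maps to <element>.
STYLE_PREFIX = "tei:"


def runs_to_tei(runs):
    """Turn (style_name, text) pairs into a single <p> element string."""
    parts = []
    for style_name, text in runs:
        content = escape(text)  # escape &, <, > so the output stays well-formed
        if style_name and style_name.startswith(STYLE_PREFIX):
            tag = style_name[len(STYLE_PREFIX):]
            parts.append(f"<{tag}>{content}</{tag}>")
        else:
            # Runs without a "tei:" style are emitted as plain text.
            parts.append(content)
    return "<p>" + "".join(parts) + "</p>"


def docx_to_tei_paragraphs(path):
    """Read a .docx file and return one <p> string per Word paragraph."""
    # python-docx is imported here so runs_to_tei can be used without it.
    from docx import Document

    doc = Document(path)
    result = []
    for para in doc.paragraphs:
        pairs = [
            (run.style.name if run.style is not None else None, run.text)
            for run in para.runs
        ]
        result.append(runs_to_tei(pairs))
    return result
```

For example, calling `runs_to_tei([("Default Paragraph Font", "Written by "), ("tei:persName", "Taro")])` with this made-up sample text returns `<p>Written by <persName>Taro</persName></p>`. A real conversion would still need to wrap the paragraphs in a TEI header and body, and to decide how provisional styles such as “tei:warichu” map onto valid TEI elements.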
