How to Upload Media to Omeka S Using Python

Overview

This is a personal note on how to upload media to Omeka S using Python.

Preparation

Prepare the following environment variables.

    OMEKA_S_BASE_URL=https://dev.omeka.org/omeka-s-sandbox # Example
    OMEKA_S_KEY_IDENTITY=
    OMEKA_S_KEY_CREDENTIAL=

Initialize.

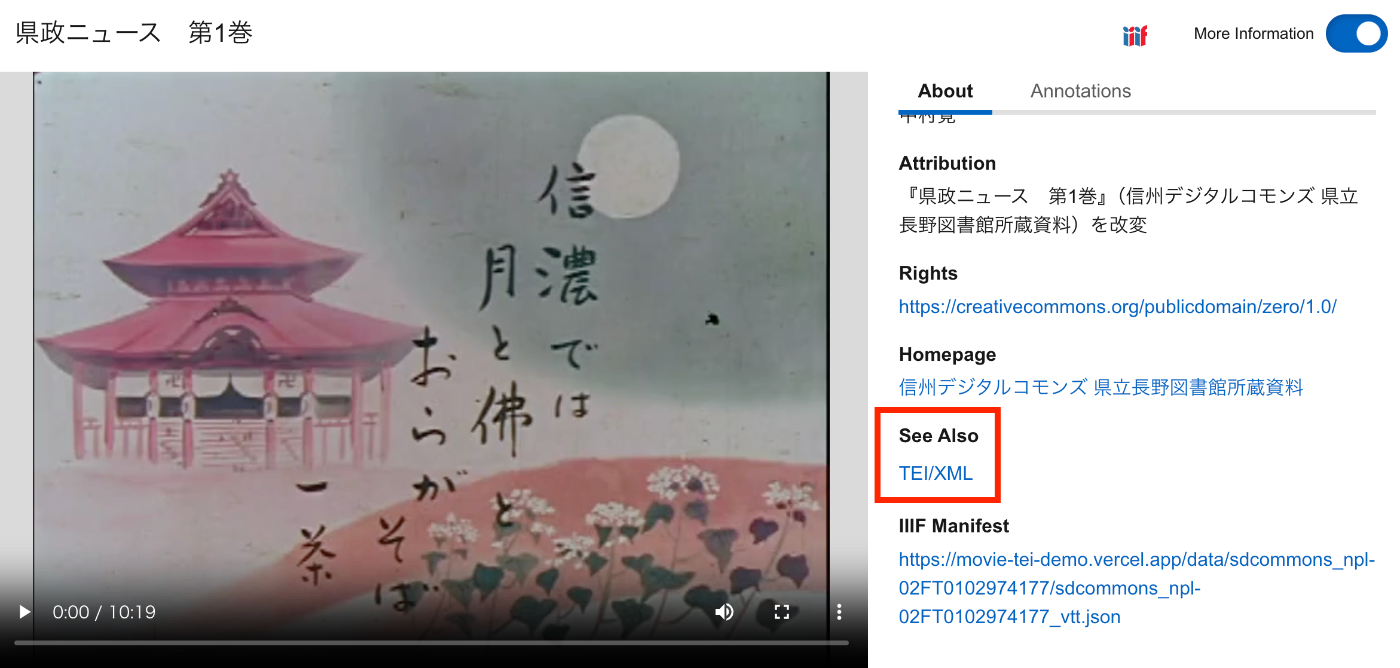

    import json
    import mimetypes
    import os

    import requests
    from dotenv import load_dotenv


    class OmekaSClient:  # placeholder name; the original shows only the methods
        def __init__(self):
            # Read the variables from .env, overriding any that are already set
            load_dotenv(verbose=True, override=True)

            OMEKA_S_BASE_URL = os.environ.get("OMEKA_S_BASE_URL")
            self.omeka_s_base_url = OMEKA_S_BASE_URL
            self.items_url = f"{OMEKA_S_BASE_URL}/api/items"
            self.media_url = f"{OMEKA_S_BASE_URL}/api/media"

            # API key pair, sent as query parameters with each request
            self.params = {
                "key_identity": os.environ.get("OMEKA_S_KEY_IDENTITY"),
                "key_credential": os.environ.get("OMEKA_S_KEY_CREDENTIAL"),
            }

Uploading a Local File

        # Method of the same class as __init__ above
        def upload_media(self, path, item_id):
            # path is a pathlib.Path; item_id is the ID of an existing item
            payload = {
                "o:ingester": "upload",
                "file_index": "0",  # refers to the file[0] part below
                "o:source": path.name,
                "o:item": {"o:id": item_id},
            }

            # Guess the MIME type from the file name, with a generic fallback
            media_type = mimetypes.guess_type(path.name)[0] or "application/octet-stream"

            # Send the JSON metadata and the file together as multipart form data
            with open(path, "rb") as f:
                files = [
                    ("data", (None, json.dumps(payload), "application/json")),
                    ("file[0]", (path.name, f, media_type)),
                ]
                media_response = requests.post(
                    self.media_url,
                    params=self.params,
                    files=files,
                )

            # Check the response
            if media_response.status_code == 200:
                return media_response.json()["o:id"]
            return None

Uploading a IIIF Image

Specify a IIIF image URL like the following to register it.

...
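The example itself is elided above, so the following is not a reconstruction of it. It is only a minimal sketch, assuming the `iiif` media ingester that ships with Omeka S, which reads the image URL (typically the image's `info.json` URL) from `o:source`. The helper names `build_iiif_media_payload` and `register_iiif_media` are hypothetical, and `media_url` and `params` stand for the values set during initialization.

```python
from typing import Any, Dict, Optional


def build_iiif_media_payload(iiif_url: str, item_id: int) -> Dict[str, Any]:
    """Build the JSON body for attaching a IIIF image to an existing item."""
    return {
        "o:ingester": "iiif",  # assumption: Omeka S's built-in IIIF ingester
        "o:source": iiif_url,  # assumption: typically the image's info.json URL
        "o:item": {"o:id": item_id},
    }


def register_iiif_media(
    media_url: str, params: Dict[str, str], iiif_url: str, item_id: int
) -> Optional[int]:
    """POST the payload to the media endpoint; return the new media ID or None."""
    # Imported here so the payload builder can be used without the dependency
    import requests

    response = requests.post(
        media_url,
        params=params,
        json=build_iiif_media_payload(iiif_url, item_id),
        timeout=30,
    )
    if response.status_code == 200:
        return response.json()["o:id"]
    return None
```

Unlike the local-file upload, no file part is needed here, so under these assumptions the request can be sent as plain JSON rather than multipart form data.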

January 3, 2025 · Updated: January 3, 2025 · 1 min · Nakamura