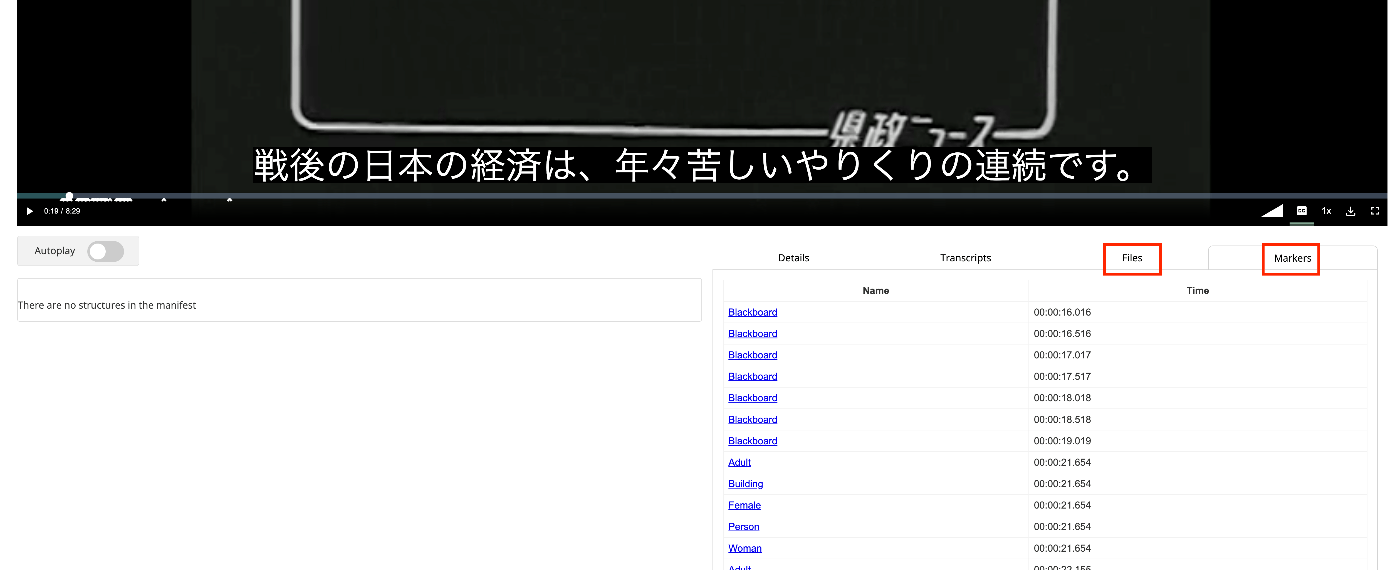

How to Use the Files/Markers Tabs in the @samvera/ramp Viewer

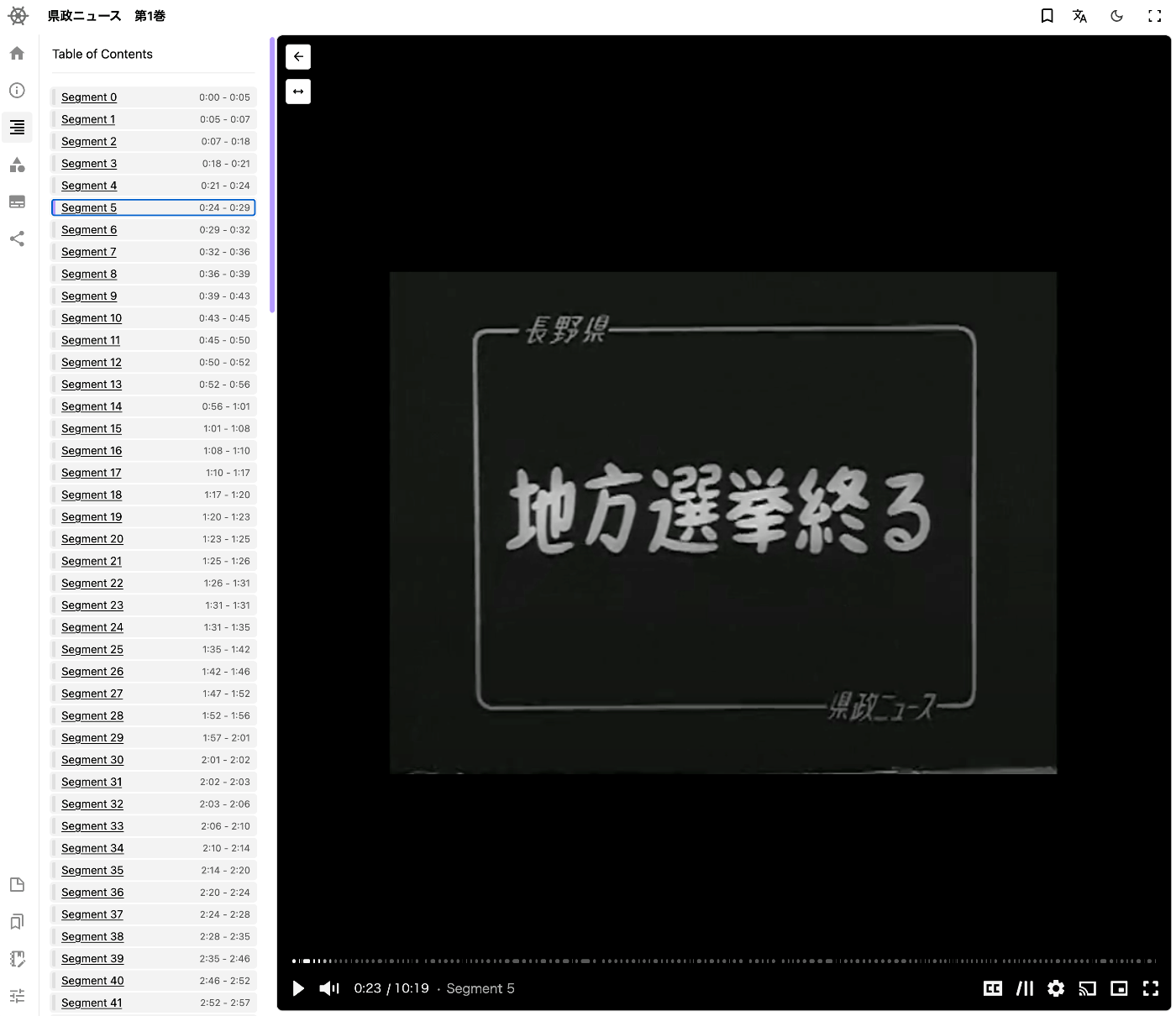

Overview

I looked into how to use the Files and Markers tabs of the @samvera/ramp viewer, one of the viewers that supports IIIF audio/visual content. This is a personal note for future reference.

Documentation

Documentation for the Files tab:

https://samvera-labs.github.io/ramp/#supplementalfiles

Documentation for the Markers tab:

https://samvera-labs.github.io/ramp/#markersdisplay

Data Used

This note uses “Kensei News Volume 1” (Nagano Prefectural Library).

https://www.ro-da.jp/shinshu-dcommons/library/02FT0102974177

Files Tab

According to the documentation, the Files tab reads the rendering property. The rendering property is also covered in the following Cookbook recipe. ...
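
As a rough illustration of the rendering property mentioned above, here is a minimal Python sketch that builds a manifest fragment with one downloadable file attached. The shape of each rendering entry (id, type, label, format) follows the IIIF Presentation API 3.0. The example.org URLs, the labels, and the helper name make_rendering are hypothetical placeholders, not values from the data used in this note, and the canvases are omitted for brevity, so this is not a complete manifest.

```python
import json


def make_rendering(file_url: str, label: str, media_type: str,
                   resource_type: str = "Text") -> dict:
    """Build one entry for a IIIF Presentation 3 `rendering` array.

    Hypothetical helper for illustration only.
    """
    return {
        "id": file_url,                # URL of the downloadable file
        "type": resource_type,         # e.g. "Text", "Dataset", "Sound"
        "label": {"en": [label]},      # language map shown to the user
        "format": media_type,          # media type of the file
    }


# Manifest fragment; all URLs below are placeholders (assumptions).
manifest = {
    "@context": "http://iiif.io/api/presentation/3/context.json",
    "id": "https://example.org/iiif/manifest.json",
    "type": "Manifest",
    "label": {"en": ["Example A/V item"]},
    "rendering": [
        make_rendering(
            "https://example.org/files/transcript.pdf",
            "Transcript (PDF)",
            "application/pdf",
        )
    ],
    "items": [],  # canvases omitted for brevity; a real manifest needs them
}

print(json.dumps(manifest, indent=2, ensure_ascii=False))
```

Whether a given file actually appears in the Files tab depends on how the viewer is configured and where the rendering property sits in the manifest, so it is worth checking the result against the viewer documentation linked above.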