Creating Apps with Azure OpenAI Assistants API Using Gradio and Next.js

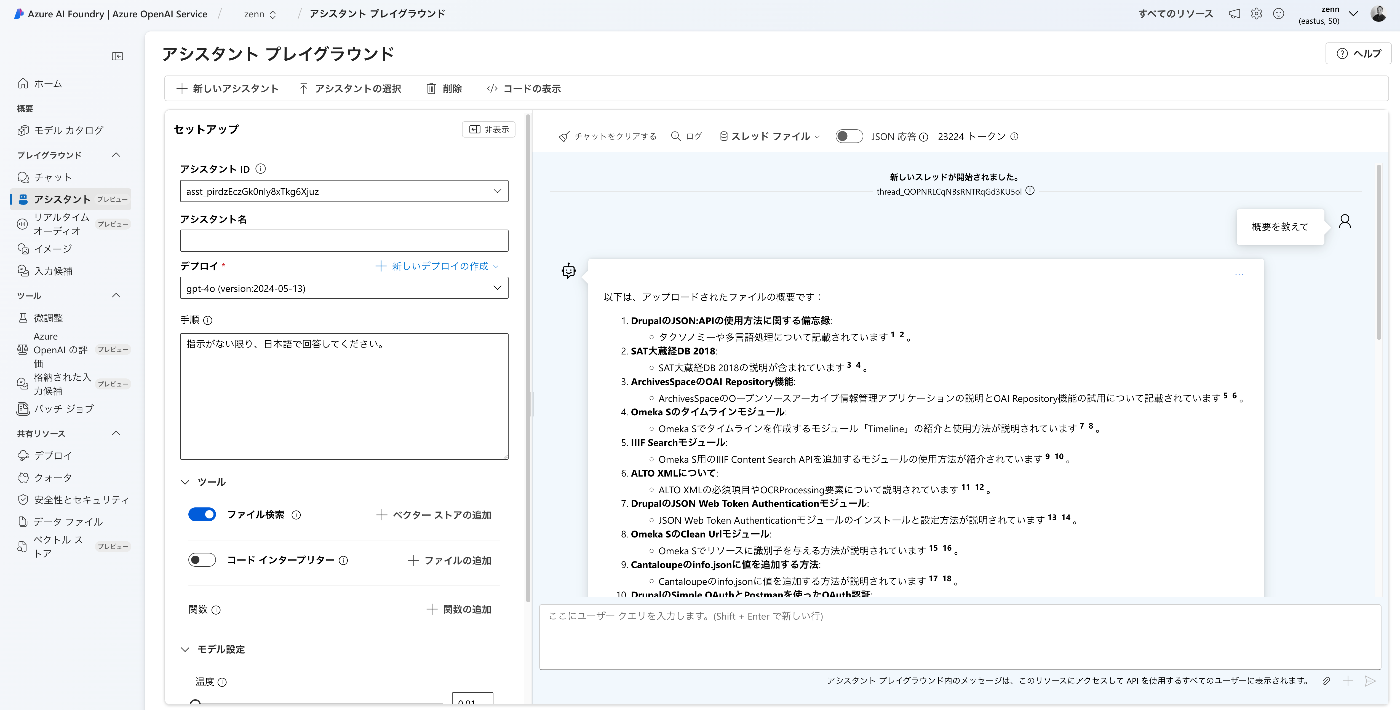

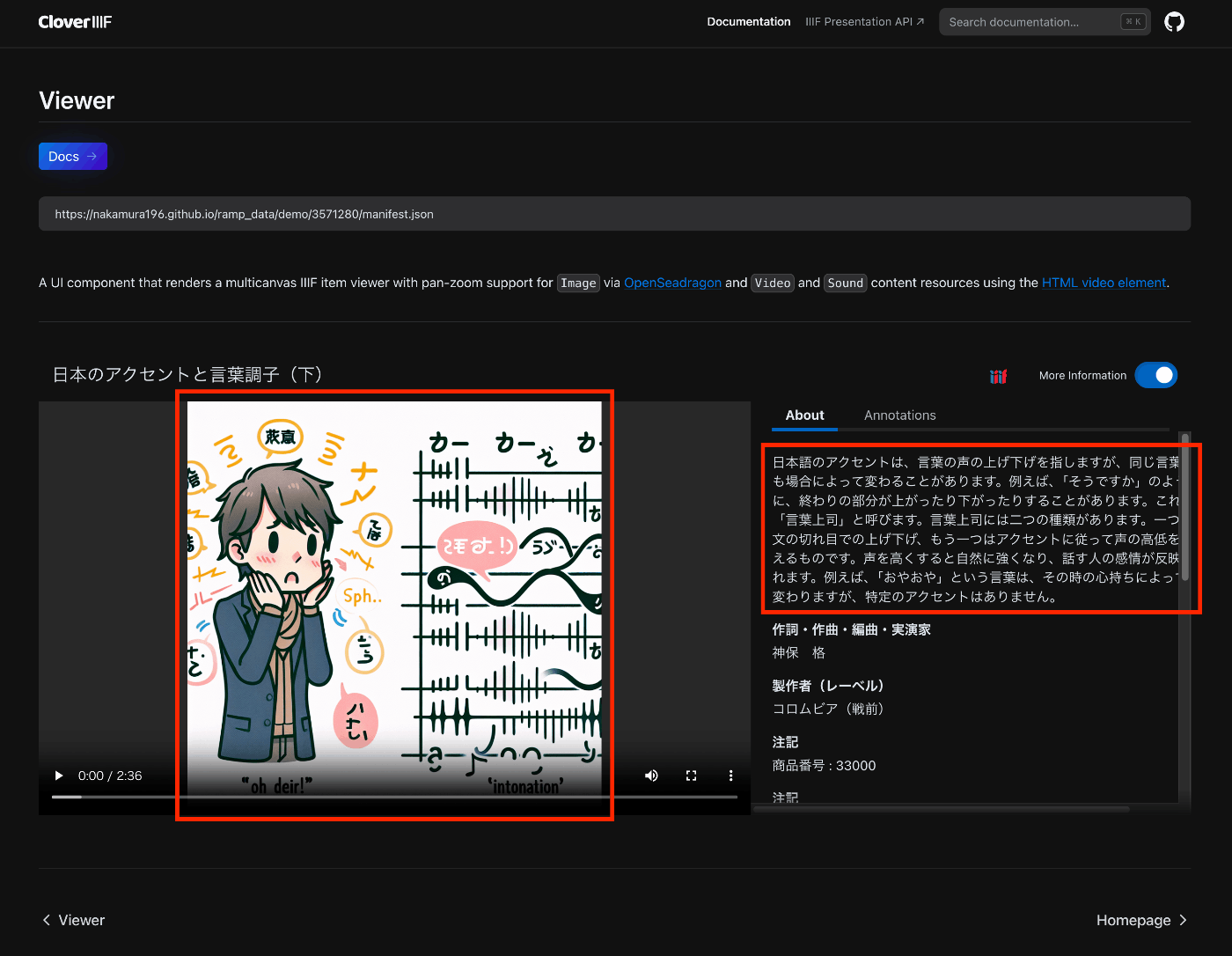

Overview

I built apps on the Azure OpenAI Assistants API using Gradio and Next.js. These are my notes.

Target Data

I used articles published on Zenn (user nakamura196) as the target data. First, I bulk-downloaded them with the following code.

```python
import os

import requests
from bs4 import BeautifulSoup
from tqdm import tqdm

# Collect article URLs from the Zenn API page by page until an empty page is returned
page = 1
urls = []
while True:
    url = f"https://zenn.dev/api/articles?username=nakamura196&page={page}"
    response = requests.get(url)
    response.raise_for_status()
    articles = response.json()["articles"]
    if len(articles) == 0:
        break
    for article in articles:
        urls.append("https://zenn.dev" + article["path"])
    page += 1

# Fetch each article and save its body text, skipping files that already exist
for url in tqdm(urls):
    text_opath = f"data/text/{url.split('/')[-1]}.txt"
    if os.path.exists(text_opath):
        continue
    response = requests.get(url)
    response.raise_for_status()
    soup = BeautifulSoup(response.text, "html.parser")
    html = soup.find(class_="znc")  # element holding the rendered article body
    if html is None:
        continue  # skip pages without the expected body element
    txt = html.get_text()
    os.makedirs(os.path.dirname(text_opath), exist_ok=True)
    with open(text_opath, "w", encoding="utf-8") as f:
        f.write(txt)
```

Registering to the Vector Store

Upload the data files with the following code.

...
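The upload code for this step is elided above. The following is a minimal sketch of one way to do it, not the author's code. It rests on several assumptions. It targets the `openai` Python SDK v1.x, in which vector stores live under `client.beta`. It reads the credentials from the environment variables `AZURE_OPENAI_API_KEY` and `AZURE_OPENAI_ENDPOINT`, and it uses the API version `2024-05-01-preview`. The helper names `list_text_files`, `chunked`, and `upload_to_vector_store`, as well as the store name and batch size, are hypothetical.

```python
import os
from pathlib import Path


def list_text_files(directory):
    """Return the sorted paths of all .txt files directly inside `directory`."""
    return sorted(str(p) for p in Path(directory).glob("*.txt"))


def chunked(items, batch_size):
    """Split `items` into consecutive lists of at most `batch_size` elements."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]


def upload_to_vector_store(paths, name="zenn-articles", batch_size=100):
    """Hypothetical helper: create a vector store and upload `paths` in batches.

    Assumes openai SDK v1.x (vector stores under client.beta) and the
    AZURE_OPENAI_API_KEY / AZURE_OPENAI_ENDPOINT environment variables.
    """
    # Imported here so the pure helpers above work without the SDK installed
    from openai import AzureOpenAI

    client = AzureOpenAI(
        api_key=os.environ["AZURE_OPENAI_API_KEY"],
        azure_endpoint=os.environ["AZURE_OPENAI_ENDPOINT"],
        api_version="2024-05-01-preview",  # assumed version with Assistants v2 support
    )
    vector_store = client.beta.vector_stores.create(name=name)
    for batch in chunked(paths, batch_size):
        # Open only one batch of files at a time and always close them afterwards
        streams = [open(path, "rb") for path in batch]
        try:
            file_batch = client.beta.vector_stores.file_batches.upload_and_poll(
                vector_store_id=vector_store.id, files=streams
            )
            print(file_batch.status, file_batch.file_counts)
        finally:
            for stream in streams:
                stream.close()
    return vector_store.id
```

Calling `upload_to_vector_store(list_text_files("data/text"))` would return the ID of the new vector store, which an assistant's `file_search` tool could then reference. Uploading in batches keeps a few hundred article files from being held open at once. `upload_and_poll` waits until each batch finishes processing before the next one starts.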