Created Version 2 of the NDLOCR App Using Google Colab

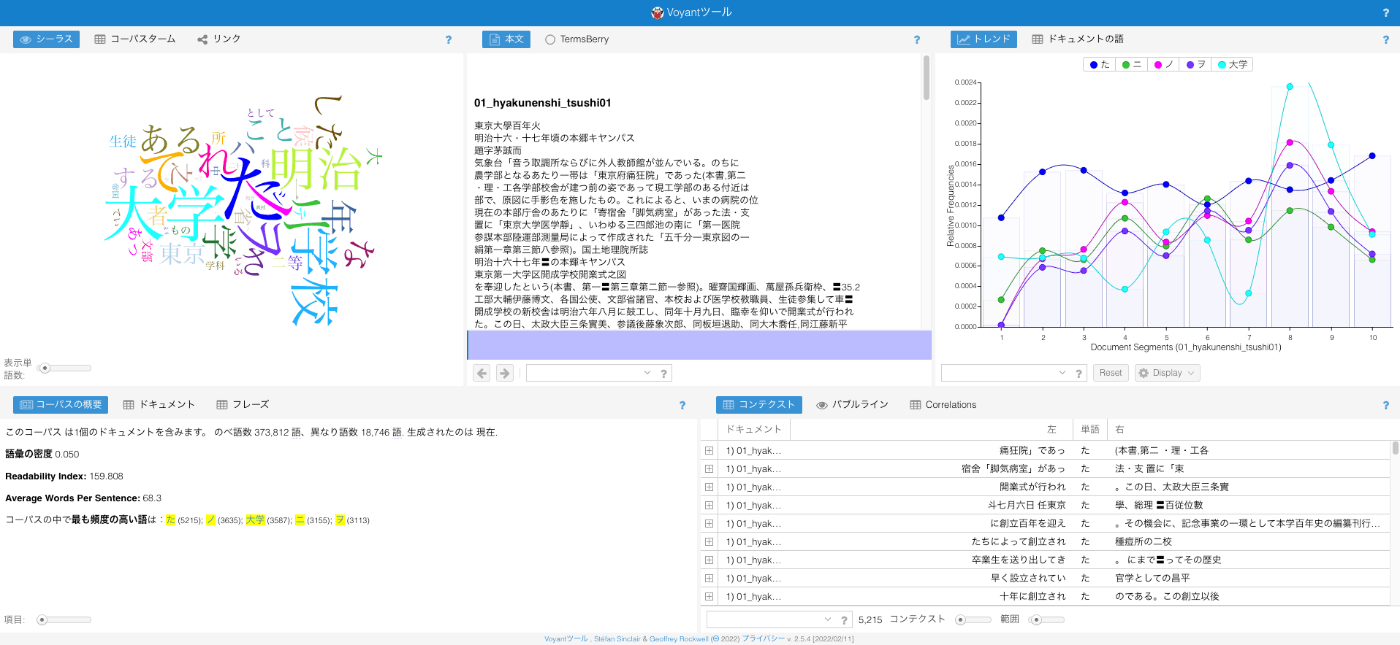

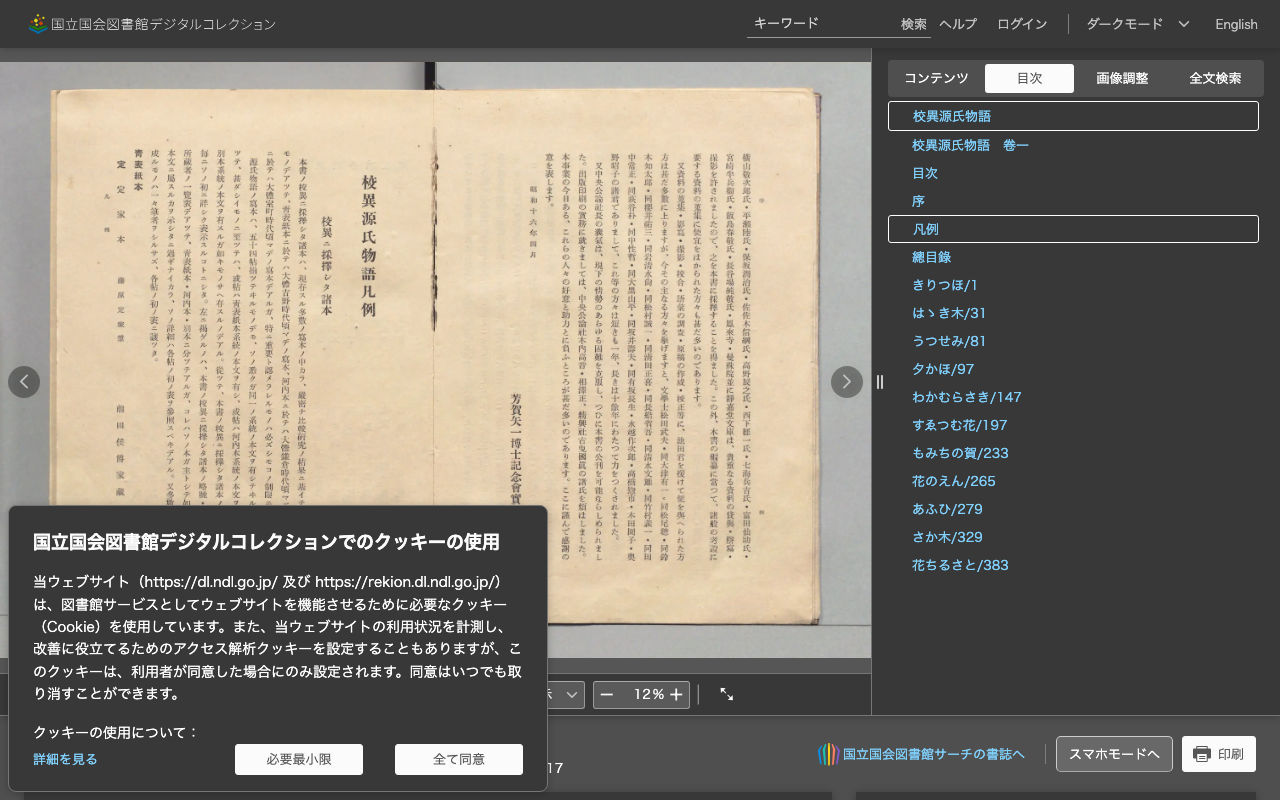

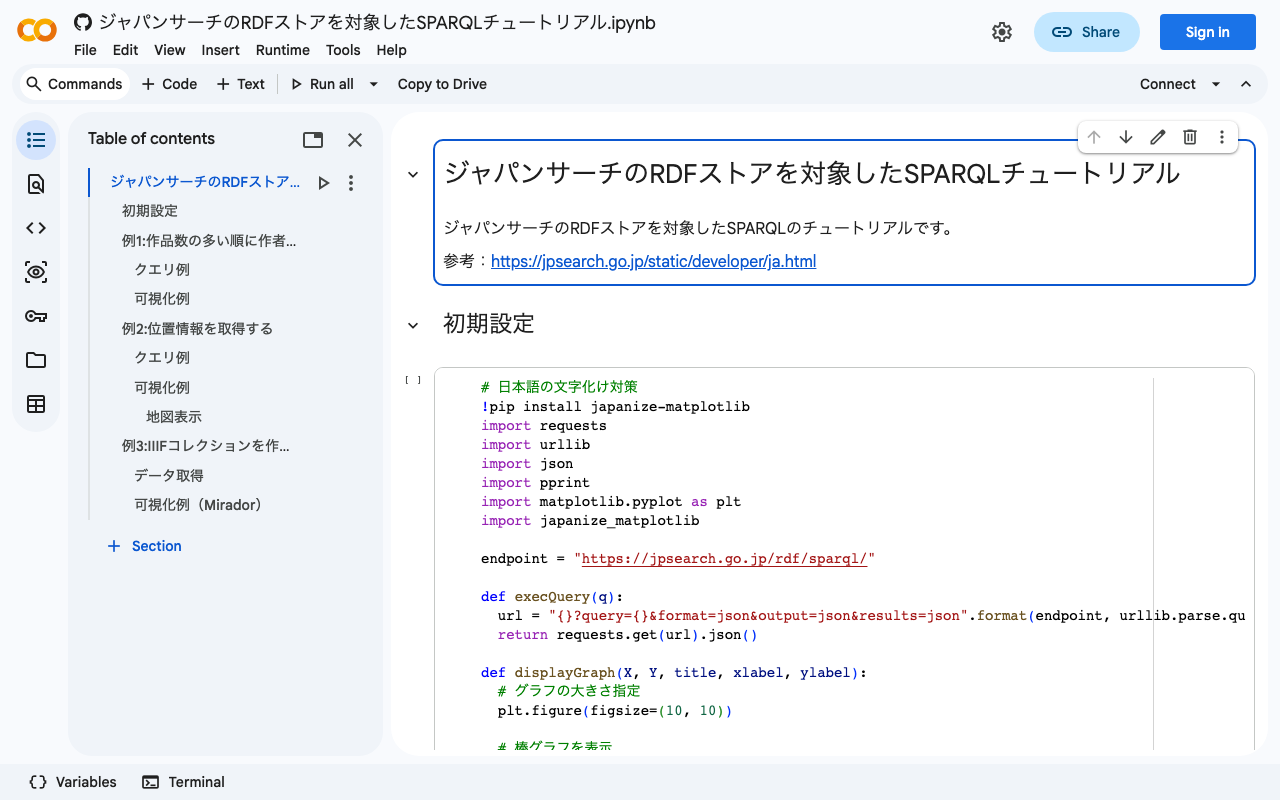

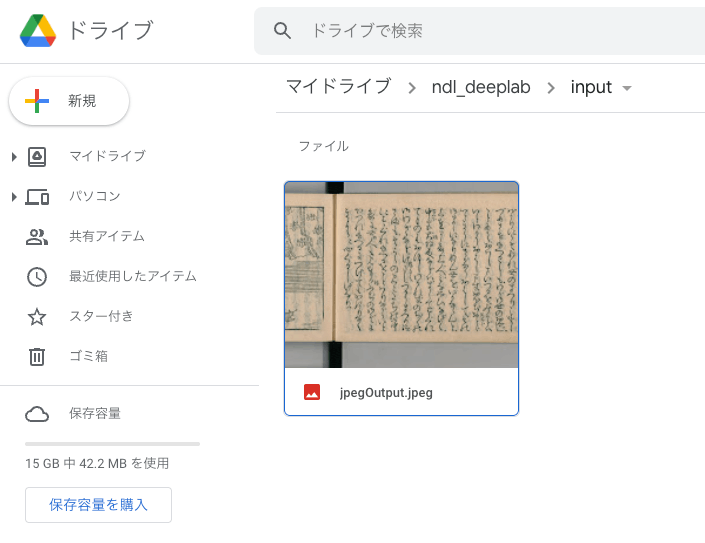

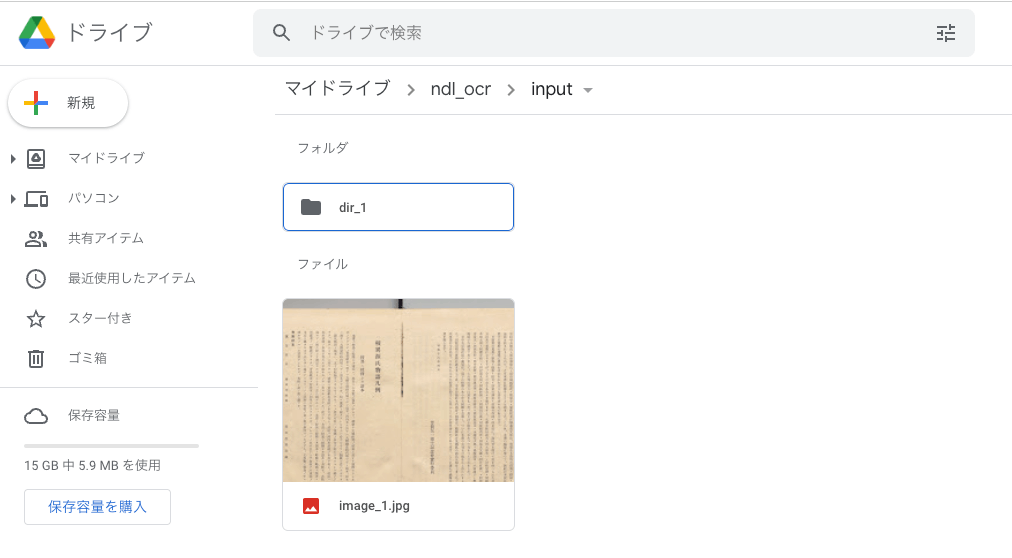

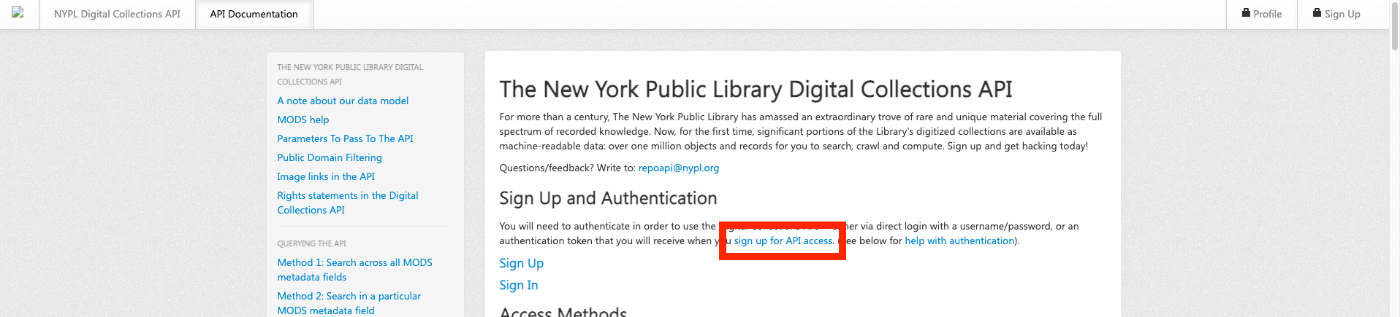

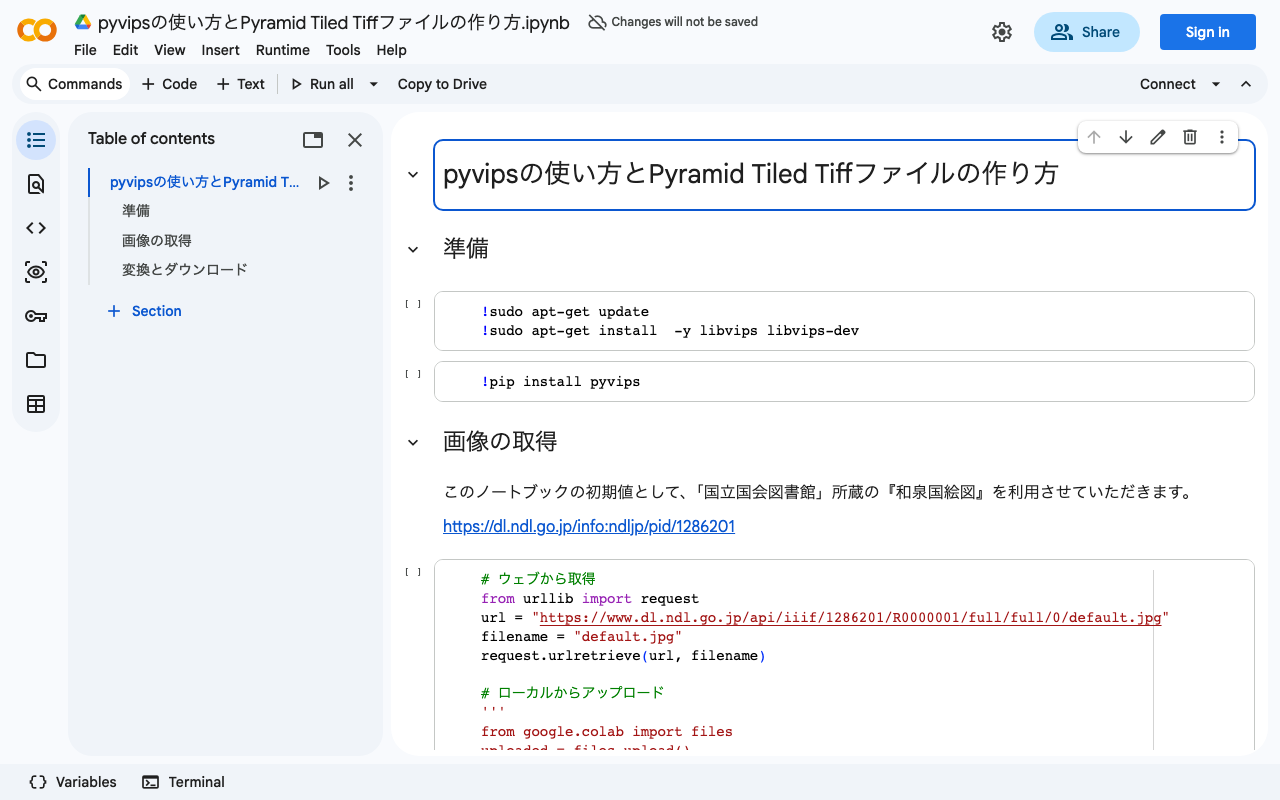

Announcements

Notebook URL

https://colab.research.google.com/github/nakamura196/ndl_ocr/blob/main/ndl_ocr_v2.ipynb

2022-07-06

A demo video showing how to use the notebook is now available.

https://youtu.be/46p7ZZSul0o

Additionally, a ruby (furigana) text conversion feature has been added.

Overview

I previously created an NDLOCR app using Google Colab and introduced it in the following article. This time, I created Version 2, an improved version of that notebook. You can access the notebook from the following link.

https://colab.research.google.com/github/nakamura196/ndl_ocr/blob/main/ndl_ocr_v2.ipynb

Features

Support for multiple input formats has been added. The following options are available: ...
