This article is co-authored with a generative AI. Facts have been cross-checked against official documentation where possible, but errors may remain. Please verify against primary sources before making any important decisions.

We ran two OCR engines on fascicles 571–575 of the Mahāprajñāpāramitā Sūtra (大般若波羅蜜多經) in the Jiaxing Tripitaka (嘉興版大蔵経) held by Yūrenja (酉蓮社, formerly the Hōonzō repository of Zōjōji), accessing the resource as 105 IIIF images via the Yūrenja Catalog Database hosted on Toyo Bunko’s research-project IIIF infrastructure.

- NDL Koten OCR-Lite, released by Japan’s National Diet Library

- Google Cloud’s Cloud Vision API (

DOCUMENT_TEXT_DETECTION)

This comparison was prompted by an expert who pointed out that Cloud Vision API can be the stronger choice over NDL Koten OCR in some situations. To check whether the same pattern holds on this particular resource, I tabulated outputs across all 105 images. On some metrics Vision was indeed closer to the reference text; on others NDL came out ahead. The two engines have different strengths and weaknesses rather than a single ordering.

TL;DR

- For this resource, both engines extracted comparable amounts of body text (NDL: 39,768 chars; Vision: 38,740 chars after noise filtering).

- NDL Koten OCR-Lite generated lines of text not actually present in the image on 12 of 105 pages — for example, lines like “いの”, “あま”, or “一月にて琉球人の神香物語り…” that mix hiragana/katakana into what is otherwise pure literary Chinese.

- Cloud Vision API picked up color charts / centimeter rulers / shelf labels / red seals embedded in the IIIF capture as if they were body text, on all 105 pages.

- For glyphs, the NDL XML used in this article had already been processed with a character-normalization (substitution-list) pass for proofreading, and is unified to traditional/Kangxi forms (爲, 曰, 淸, etc.). Vision output, by contrast, leans toward modern Japanese / simplified-Chinese forms (為, 日, 清, etc.). Note that this comparison is not raw NDL OCR vs Vision but post-processed final data vs Vision (see Observation 3).

- For complex multi-column layouts at the end of each fascicle (the onshaku 音釋 phonetic glosses, where a head character is annotated with bichrome small-character interlinear notes — warigaki 割書), NDL extracted far more lines than Vision (102 vs. 49 on the same image).

- Jaccard character-set overlap with the reference text after noise filtering was slightly higher for Vision (0.704 vs. 0.674).

The Resource

The target was fascicles 571–575 of the Mahāprajñāpāramitā Sūtra in the Jiaxing Tripitaka held at Yūrenja (formerly Zōjōji’s Hōonzō). For full bibliographic details, see the Yūrenja Catalog Database. The database and the IIIF distribution used here are research outputs of JSPS KAKENHI grants 18K00073 / 21H04345 / 25H00464.

- IIIF manifest:

https://u-renja.toyobunko-lab.jp/api/iiif/2/015-03/manifest - Number of canvases (images): 105

- Original image size: 10328 × 7760 px

- Content: a printed edition (carved-block, square-script). Includes the cover, outer title, head titles and body text of each fascicle, end-of-fascicle phonetic glosses (onshaku), colophons, and ownership stamps.

The IIIF Image API is at level 2 compliance, so a sized JPEG can be requested with /full/{w},/0/default.jpg.

The cover bears a manuscript inscription in black ink (“十五函之三”, a chest/crate identifier) and a printed title slip with the work’s name and the volume range.

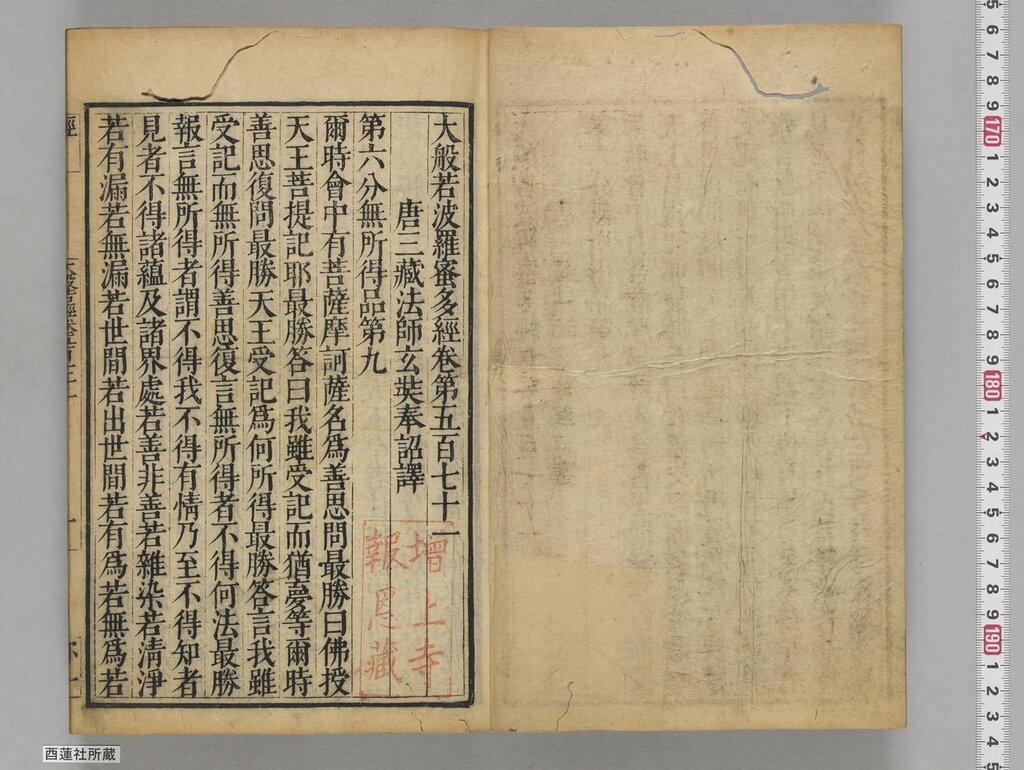

The front page captures, alongside the sūtra title / translator credit / chapter title: the red seal “增上寺報恩藏” (indicating the prior owner), a shelf label at the bottom-left reading “酉蓮社所蔵” (current owner), a color chart at the top-right, and a centimeter ruler — all in the same image.

Method

For NDL, I used the existing TEI/XML output (015-03.xml) supplied with the resource. This XML combines NDL Koten OCR-Lite’s line detection (<lb> elements and the <zone> coordinates inside <facsimile>) with structural information and character corrections added by hand on the data-provider side during proofreading. The type="cover" (annotation), "line", "fascicle", "chapter" and similar attributes on <lb>, and the unification to Kangxi-style glyphs mentioned earlier, were all assigned/applied by the human proofreader — not generated by NDL Koten OCR-Lite itself.

For Vision API, I fetched each IIIF image and ran DOCUMENT_TEXT_DETECTION, taking fullTextAnnotation.text. 105 images fits comfortably inside the 1,000-units/month free tier.

A minimal Python call (using only urllib):

import base64, json, subprocess, urllib.request

token = subprocess.check_output(["gcloud", "auth", "print-access-token"], text=True).strip()

img_b64 = base64.b64encode(open("canvas_002.jpg", "rb").read()).decode()

body = {

"requests": [{

"image": {"content": img_b64},

"features": [{"type": "DOCUMENT_TEXT_DETECTION"}],

"imageContext": {"languageHints": ["ja", "zh"]},

}]

}

req = urllib.request.Request(

"https://vision.googleapis.com/v1/images:annotate",

data=json.dumps(body).encode(),

headers={

"Authorization": f"Bearer {token}",

"Content-Type": "application/json; charset=utf-8",

"x-goog-user-project": "your-project-id",

},

)

with urllib.request.urlopen(req, timeout=120) as r:

result = json.loads(r.read())

print(result["responses"][0]["fullTextAnnotation"]["text"])

I supply languageHints with both ja and zh. Classical Buddhist printed editions benefit from a Chinese-leaning context too.

Images were fetched at /full/2048,/0/default.jpg, which keeps each JPEG at roughly 2–3 MB (well under the 20 MB per-request limit of Vision API).

Observation 1: Phantom kana in NDL output

NDL output included lines that mix hiragana/katakana characters that have no business appearing on a literary-Chinese printed page. Such lines occurred on 12 of the 105 images (11.4%).

A representative selection:

canvas 2 : 一月にて琉球人の神香物語り十一日本宅の宅の國の國の國の

canvas 2 : 七月廿一日の大地震動するにてもありて伊外にて

canvas 2 : 「アウスル」「アースルヤード」「ペロー

canvas 7 : い………………………………一切のふいのマのの

canvas 10 : いの

canvas 13 : めの…一心のやいのマのめ三ッ心のあいのマロの

canvas 30 : 一日にてそのありて其國

canvas 43 : あま

canvas 43 : 犂やし

canvas 64 : 身に

canvas 85 : あま

Looking at the actual canvas 2 image, none of those mixed-kana strings appears anywhere. There is the sūtra title, the body text, the seal, and the shelf label — that is all.

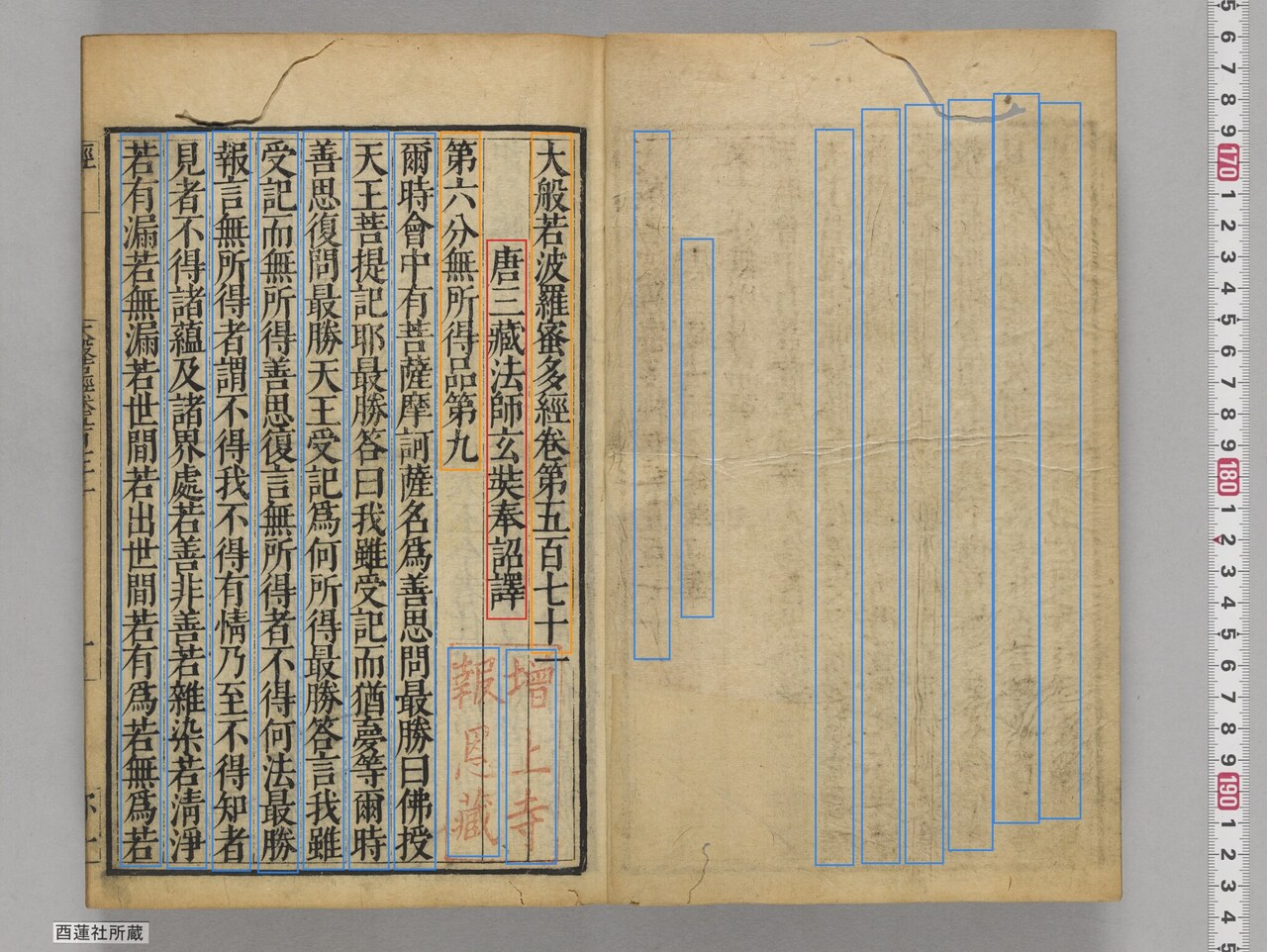

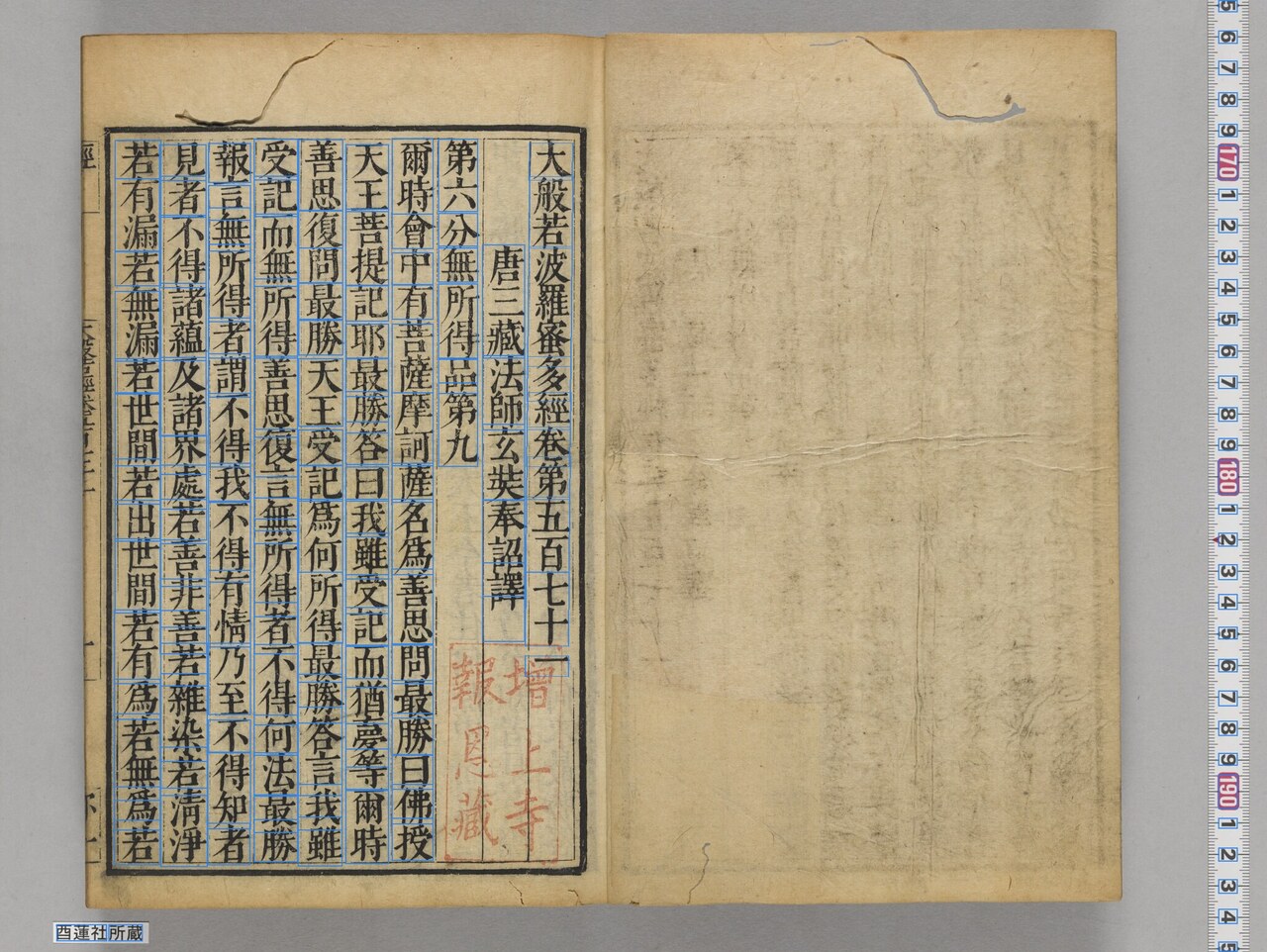

NDL Koten OCR-Lite’s TEI XML includes a <facsimile> section with the bounding-zone coordinates for every line, so we can overlay those rectangles directly onto the source image and see what is happening:

The body text and head title on the right page get reasonable rectangles. But the left page — which is essentially blank yellow paper, save for some faint print-rule lines bleeding through — also gets nine column-shaped rectangles in the same style as real body lines. NDL then runs character recognition on those rectangles and produces the strings like “一月にて琉球人の神香物語り…” that we see in the output. In the XML, the proofreader has tagged each as <lb type="line">, indistinguishable from real body lines in the structure (note that the type="line" label itself was added by hand, not by NDL OCR).

When the same images go through Vision API, none of these phantom kana “lines” appear in its output.

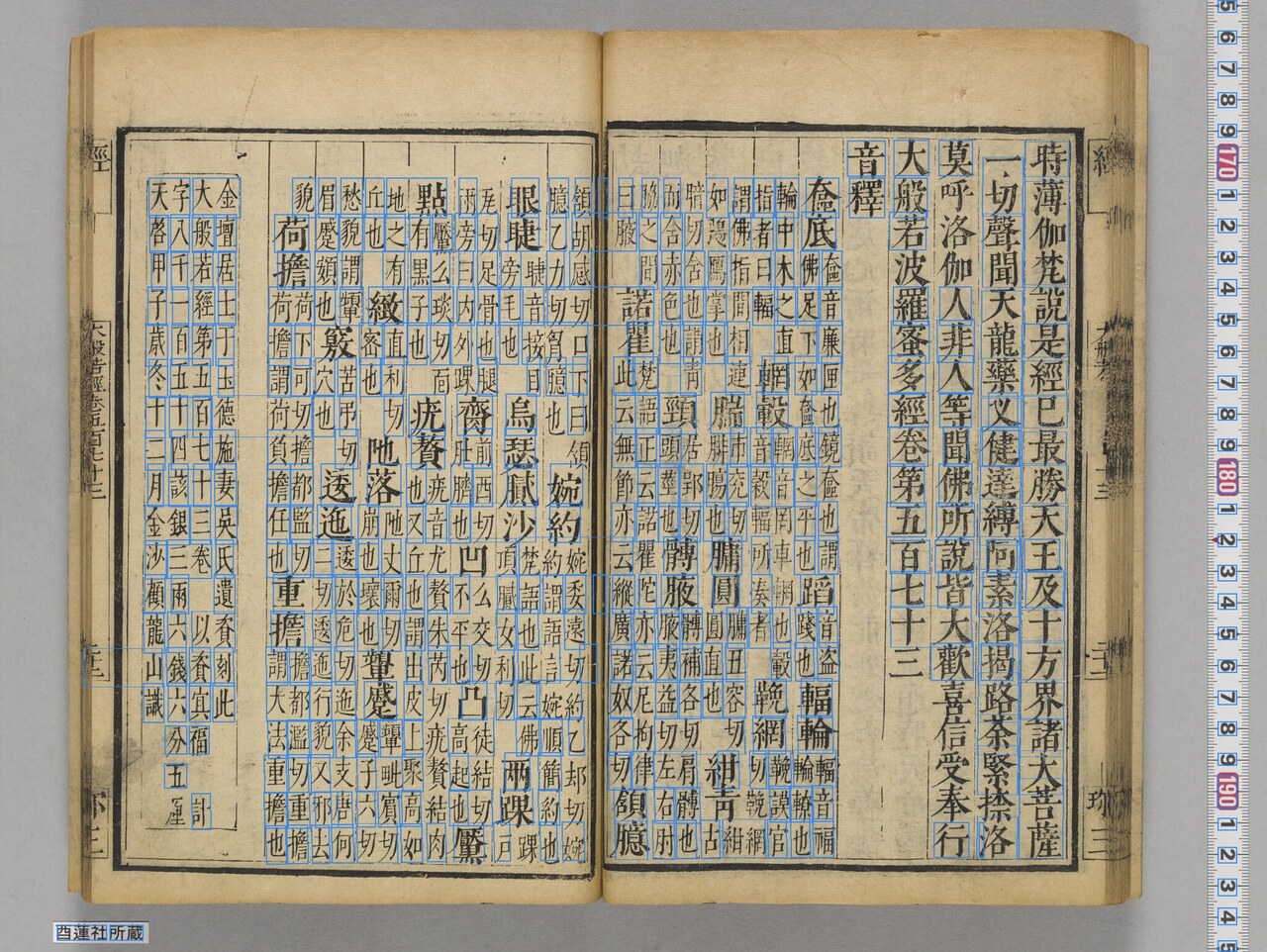

Observation 2: Vision picking up rulers and shelf labels

Conversely, Vision API picks up things NDL ignores: the color chart / centimeter ruler / shelf label / red seal that are physically present in the IIIF capture. This happens on every one of the 105 images.

A snippet of the Vision output for canvas 2 (fullTextAnnotation.text):

5 6 7 8 9 170 1 2 3 4 5 6 7 8 9 180 1 2 3 4 5 6 7 8 9 190 1 2 3 4 5

大般若波羅蜜多經卷第五百七十一

唐三藏法師玄奘奉詔譯

第六分無所得品第九

爾時會中有菩薩摩訶薩名為善思問最勝日佛授

天王菩提記耶最勝答日我雖受記而猶鼻等爾時

...

西蓮社所蔵

The leading 5 6 7 8 9 170 1 2 3 4 5... is the centimeter ruler at the right edge of the image. The trailing 西蓮社所蔵 is the shelf label at the bottom-left. Vision delivers both of these in the same text field, concatenated with body lines.

Note that the correct reading of the label is 「酉蓮社所蔵」 (酉, yū) — Vision misreads the first character as 西. 酉 and 西 are visually almost identical (the only difference is whether there is a horizontal stroke through the middle), and they are a classic confusion pair for general-purpose OCR. NDL did not extract this label region in this run, so this particular character-substitution does not appear there.

Drawing the bounding boxes makes it directly visible that all of these are detected as word-level units alongside the body text:

Aggregate:

- Total characters classified as noise: ~7,560

- Pages with noise mixed in: 105 / 105

For practical use, downstream filtering by line position (boundingPoly) or by digit ratio is necessary. For this article’s tally, I applied minimal regex-based noise filtering before computing Jaccard.

Observation 3: Glyph preservation

Note: The NDL TEI XML used in this article was provided to us as data after a character-normalization step (substitution list applied in bulk for proofreading purposes). The “NDL keeps Kangxi forms; Vision falls back to modern forms” pattern observed in this section is therefore not a comparison of raw NDL Koten OCR-Lite output against Vision, but a comparison of that already-post-processed final data against Vision API output. Evaluating raw NDL OCR’s own glyph behavior would require comparing against the pre-substitution raw output (which is out of scope for this article).

This resource is set in glyphs close to the Kangxi standard. Comparing the same string across the two outputs shows the following difference of state:

| Character (in body text) | NDL XML (post-substitution) | Cloud Vision API |

|---|---|---|

| 爲 / 為 | 爲 (Kangxi) | 為 (modern Japanese) |

| 曰 / 日 | 曰 | 日 |

| 淸 / 清 | 淸 (older form) | 清 (modern) |

| 黑 / 黒 | 黑 | 黒 |

For example, the opening body line of canvas 2:

NDL : 爾時會中有菩薩摩訶薩名爲善思問最勝曰佛授

Vision : 爾時會中有菩薩摩訶薩名為善思問最勝日佛授

Vision also seems to be influenced by simplified-Chinese training data: the 日 substitution happens consistently even in contexts where 曰 is unambiguously the right reading.

For projects transcribing classical Chinese material, glyph form is part of the data. To feed Vision output (which has been pulled toward modern forms) into TEI or a transcription dataset, you need a downstream glyph-conversion table (為→爲 and so on). The NDL XML used in this article is precisely a state in which that kind of post-processing has already been done — whether NDL OCR’s own output recognizes 爲 vs. 為 is a separate question we did not evaluate here.

Conversely, when the goal is “make the body text easier for a modern Japanese reader to read,” the Vision output in modern glyphs is arguably more readable. It depends on the use case.

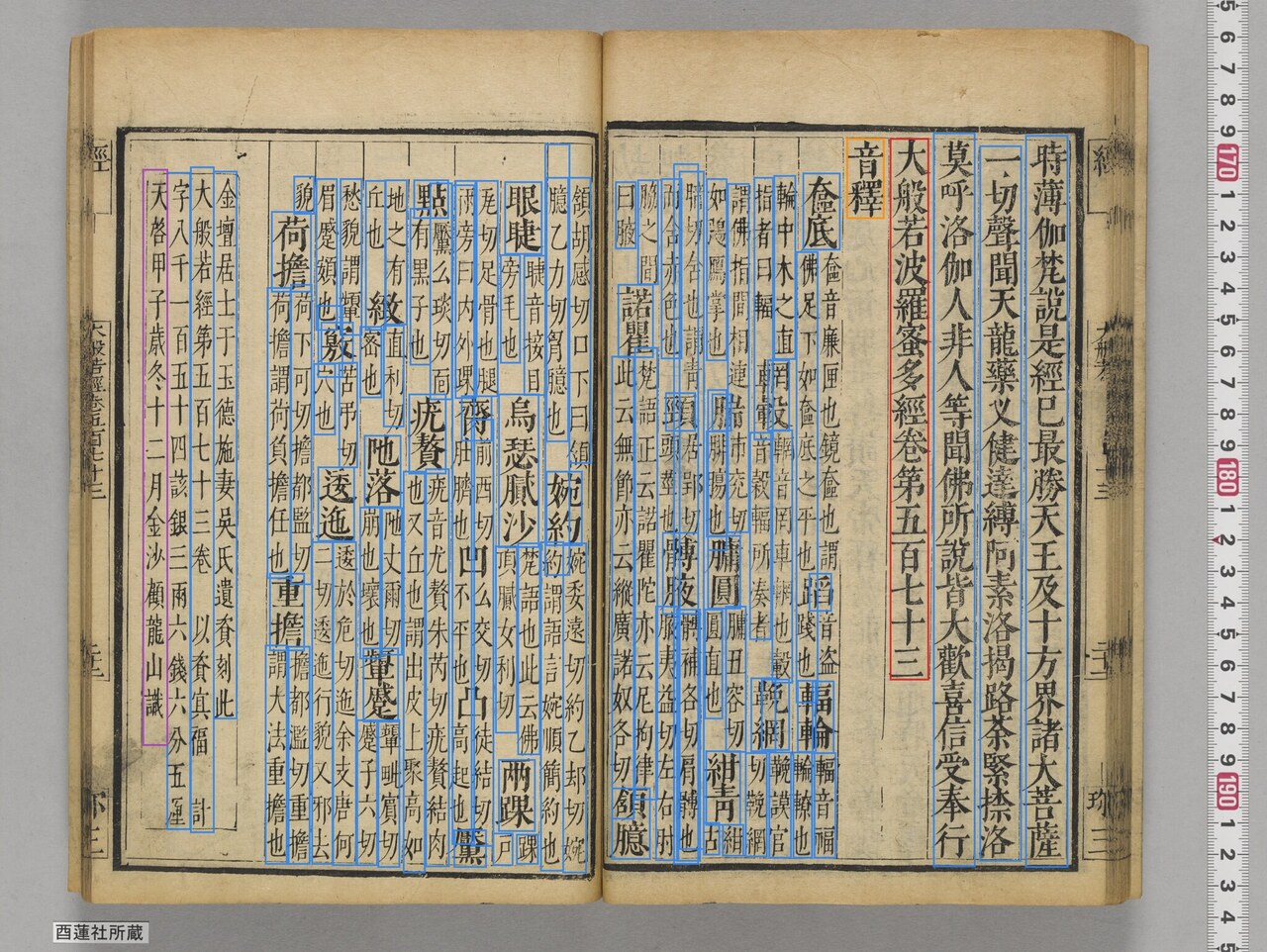

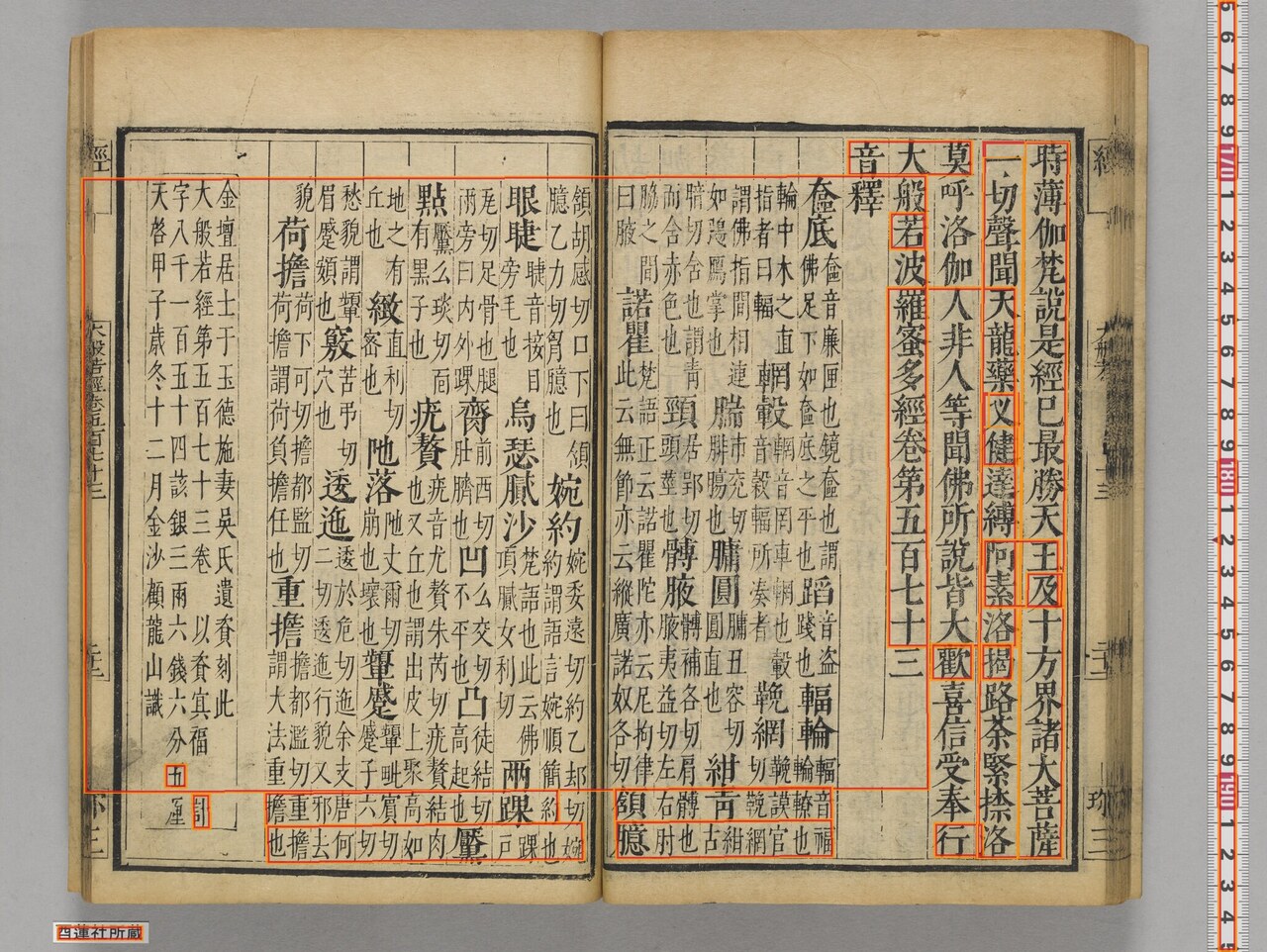

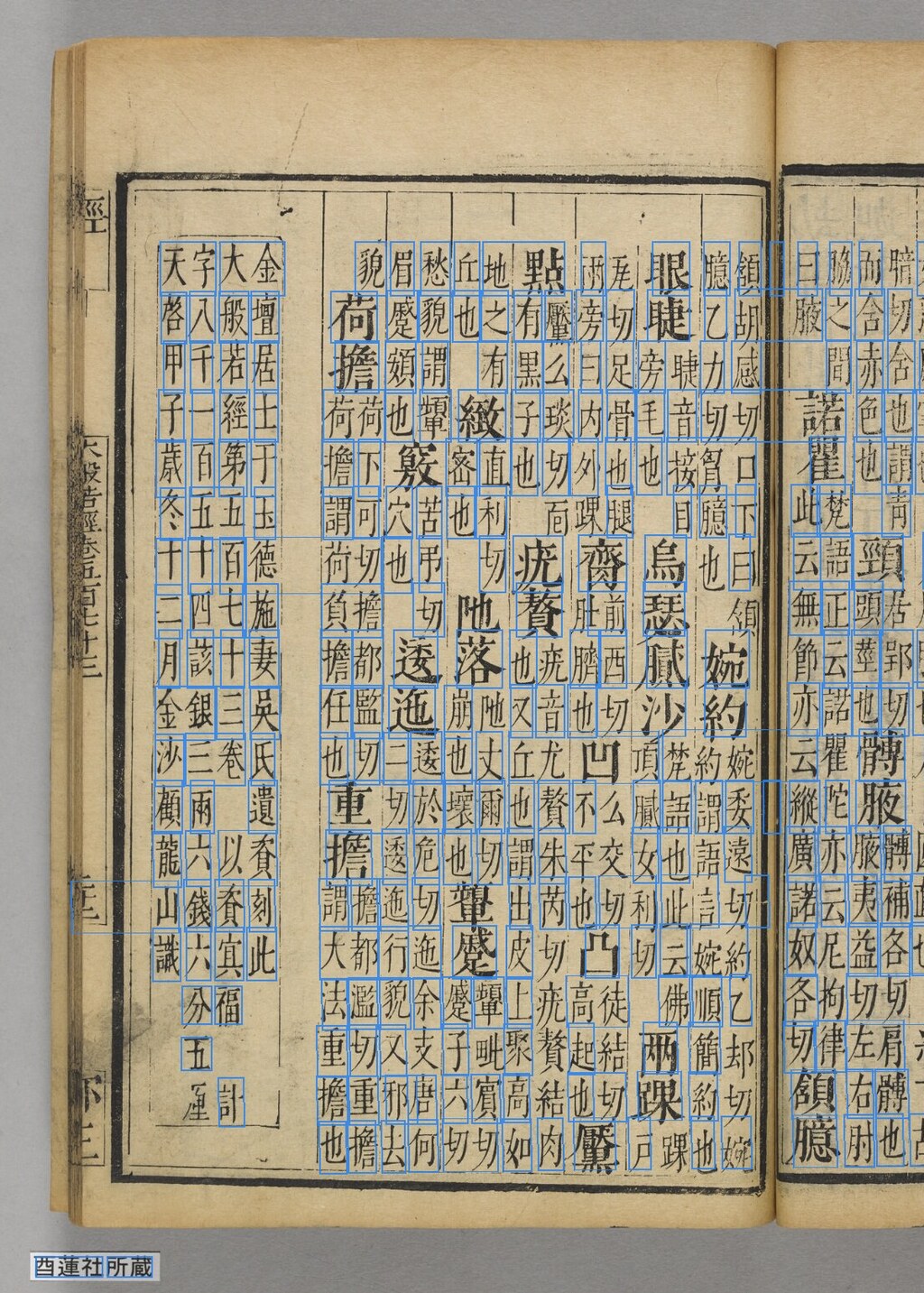

Observation 4: Complex layout (onshaku and warigaki)

At the end of each fascicle is onshaku (音釋) — phonetic glosses that present a difficult character at full size, then annotate its reading and meaning in a two-column run of small characters underneath (a layout convention called warigaki 割書). The result is small text laid out in irregular multi-column grids — one of the hardest layouts for OCR.

Number of output lines for canvas 64:

- NDL: 102

- Vision: 49

NDL’s recognition rectangles

NDL Koten OCR-Lite’s TEI XML carries each line’s zone (ulx, uly, lrx, lry) in the <facsimile> section. Overlaid on canvas 64:

NDL takes vertical-column units for body text and head-character + warigaki cluster units for the onshaku. Treating each warigaki cluster as one unit is a noticeable contrast against Vision (below).

Vision API’s recognition rectangles

Vision API’s fullTextAnnotation is a hierarchy of page → block → paragraph → word → symbol, each with boundingBox.vertices. Overlaying these onto the source image directly shows how Vision is segmenting the page.

Block (red) and paragraph (orange) level:

The right-page sūtra body is captured cleanly as a single big block. The left-page onshaku, with its multi-column small-character layout, is split into many small blocks; the leftmost vertical colophon column is detected as its own block.

Drilling down to word-level rectangles (blue), each small character of the warigaki annotations is detected as its own box:

Closeup of the warigaki:

The detection rectangles themselves are reasonable. The trouble is the reading order when these are flattened into fullTextAnnotation.text. Vision sometimes treats the small annotation characters as text running horizontally next to the head character, so the resulting text snake-lines back and forth between head characters, annotations, and adjacent lines.

NDL (opening of the onshaku, excerpt):

音釋

檢底

轎音福

醫倫

四十

車車

佛足下如檢底之平也卑踐也

輪輪輪

輪中木之直

轎音岡車鯛也穀

...

Vision (same region, excerpt):

音大莫

若义

健

羅人

蜜 非

多 人

經等

...

So the rectangle detection is solid; it is the line-assembly afterwards that breaks down on warigaki-style multi-column vertical layout. NDL has its own character-level recognition errors, but it preserves the layout, so the output is still a workable starting point for human proofreading. For onshaku pages, the more practical approach with Vision is to use the rectangle data directly and rebuild reading order in your own code.

Observation 5: Body-text errors apart from kana hallucination

Setting aside the kana hallucination, both engines have errors in the actual sūtra body. Same canvas 2 body lines:

| Output | |

|---|---|

| Reference (correct classical glyphs) | 爾時會中有菩薩摩訶薩名爲善思問最勝曰佛授 |

| NDL | 爾時會中有菩薩摩訶薩名爲善思問最勝曰佛授 |

| Vision | 爾時會中有菩薩摩訶薩名為善思問最勝日佛授 |

| Output | |

|---|---|

| Reference | 天王菩提記耶最勝答曰我雖受記而猶夢等爾時 |

| NDL | 天王菩提記耶最勝答曰我雖受記而猶夢等爾時 |

| Vision | 天王菩提記耶最勝答日我雖受記而猶鼻等爾時 |

Visually-similar substitutions like 夢 → 鼻 show up in both engines, but somewhat more often on the Vision side.

For the head title (the title line itself), the direction of error reverses:

NDL : 大般若波羅蜜多經卷第五百七十 ← drops the trailing "一"

Vision : 大般若波羅蜜多經卷第五百七十一 ← captured in full

— Vision is sometimes the one that captures the longer string in one shot. Layout analysis and character recognition both depend strongly on per-image position and line spacing.

Aggregate

A rough summary across all 105 images:

| Metric | NDL Koten OCR-Lite | Cloud Vision API |

|---|---|---|

| Total characters extracted | 39,768 | 38,740 (after noise removal) |

| Pages with kana/katakana mixed in (false detections on a literary-Chinese page) | 12 / 105 (11.4%) | 0 / 105 |

| Pages with ruler / shelf-label mixed in | 0 / 105 | 105 / 105 |

| Total noise characters | — | ~7,560 |

| Character-set overlap with reference text (Jaccard, after noise removal) | 0.674 | 0.704 |

The “reference text” used here was kindly supplied as a body-text-only extract from the SAT Daizōkyō Text Database (大正新脩大藏經). Because the SAT body text excludes onshaku, colophons, and shelf labels, the comparison against NDL/Vision (which see those regions in the image) is not symmetric — the OCR side has access to a “wider” input than the reference. Combined with the fact that Jaccard is a coarse character-set metric, the numbers above should be read as a relative comparison of the two engines’ tendencies rather than an absolute accuracy benchmark.

Caveats

- The target here is a printed edition. For manuscripts, cursive script (kuzushiji), and hentaigana, the patterns will likely differ. NDL Koten OCR-Lite is built around Japanese classical material including those genres, so it is likely to be more advantageous on manuscript materials.

- Vision API’s

DOCUMENT_TEXT_DETECTIONis a general-purpose OCR; behavior changes withlanguageHintsconfiguration and with version updates. The numbers here are a single snapshot from April 2026. - The noise-filtering regex used for the aggregate is a minimal effort. In production, position-based filtering using Vision’s

boundingPolywould be more robust. - Because the reference (SAT body text) excludes onshaku, colophons, and shelf labels, the absolute Jaccard values are not symmetric-comparison numbers. Read them as relative NDL-vs-Vision indicators.

- The “NDL hallucination” rows above were tallied mechanically as “lines containing kana on a literary-Chinese page”. NDL Koten OCR-Lite is intentionally designed to capture inscriptions, hentaigana, and so forth, so on materials where such writing actually exists, the same heuristic would produce false positives.

- As noted in Observation 3, the NDL data used here had already passed through a character-normalization (substitution-list) step. The glyph-preservation observations are therefore a comparison of that post-processed final data against Vision API output, not a comparison of raw NDL Koten OCR-Lite output. The “use NDL when you want to preserve glyphs” line in the Practical Takeaways below assumes that this kind of post-processing pipeline is in place.

Practical takeaways

This is only 105 images, so take it as a small-sample observation. For this resource, the practical split came out like this:

- Want to capture complex layouts like onshaku; want to preserve classical-Chinese glyph forms (the latter assumes a post-processing pipeline) → NDL Koten OCR-Lite. Add a downstream filter that rejects “literary-Chinese pages with kana mixed in”, and apply a substitution / proofreading dictionary if you want canonical glyphs.

- Want body text in modern glyphs for read-through; happy to post-process errors with an LLM → Cloud Vision API. Add a noise filter for color charts / shelf labels, etc.

- If you can use both, align outputs at the line level and cross-check. Each engine’s weakness is the other’s strength. With Vision API’s free tier (1,000 units/month for

DOCUMENT_TEXT_DETECTION), an “NDL primary, Vision auxiliary” setup has a low barrier to entry.

To return to the initial point — that there are situations in which Vision can be the stronger choice — the data from this resource is consistent with that observation, in the sense that overall character-set agreement with the reference text was slightly closer for Vision (after noise removal). On the other hand, on complex-layout handling and noise immunity, the NDL side is stronger. (The way the glyph preservation appears here, as noted above, depends on the post-processed state of the data rather than on raw NDL OCR’s own behavior.) The right answer depends on material and use case; for many projects, the answer is “use both.”

Sources

Material side:

- Yūrenja (formerly Zōjōji Hōonzō) Jiaxing Tripitaka Catalog Database: https://u-renja.toyobunko-lab.jp/

- About this site (overview, KAKENHI grants): https://u-renja.toyobunko-lab.jp/about

- IIIF manifest for this resource: https://u-renja.toyobunko-lab.jp/api/iiif/2/015-03/manifest

- Funding: JSPS KAKENHI 18K00073 / 21H04345 / 25H00464

OCR and related tools:

- NDL Koten OCR-Lite: https://github.com/ndl-lab/ndlkotenocr-lite

- Cloud Vision API: https://cloud.google.com/vision/docs/ocr

- Reference text: SAT Daizōkyō Text Database https://21dzk.l.u-tokyo.ac.jp/SAT/