Adding Annotations to Videos Using iiif-prezi3

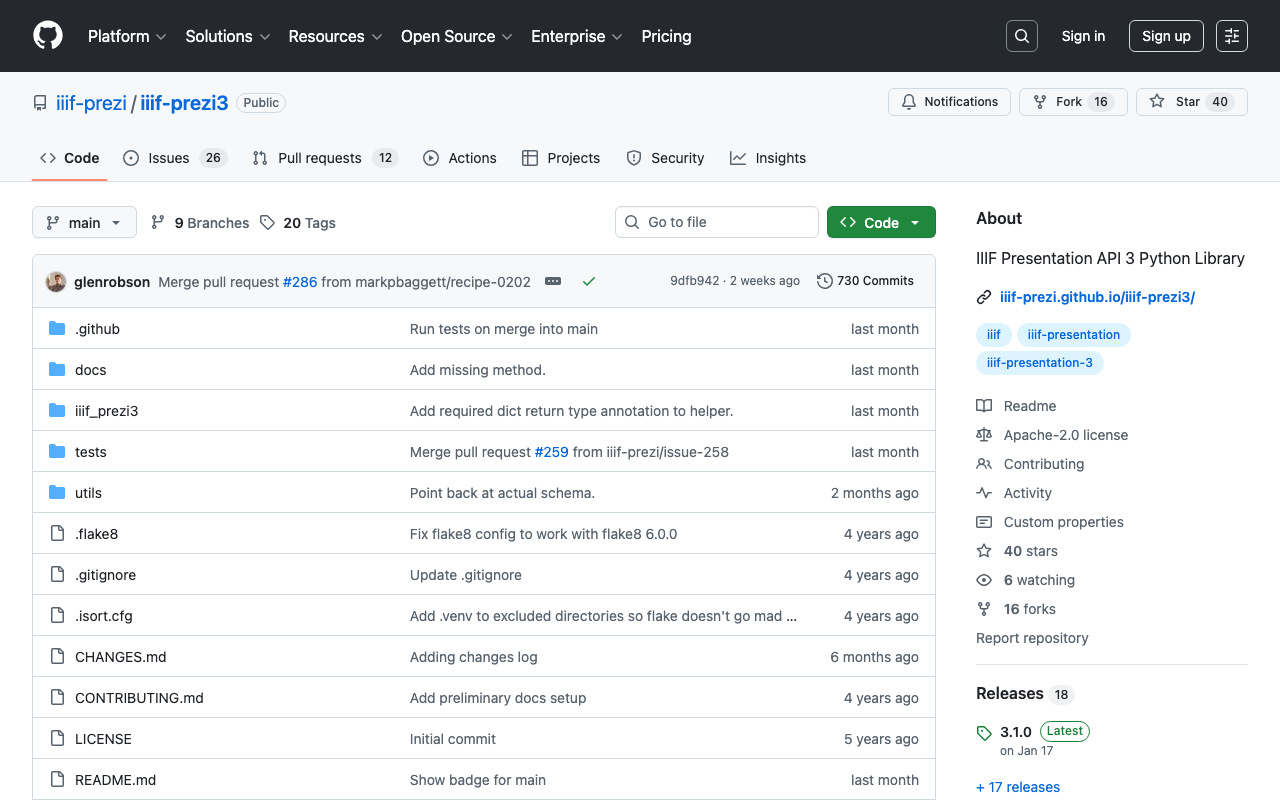

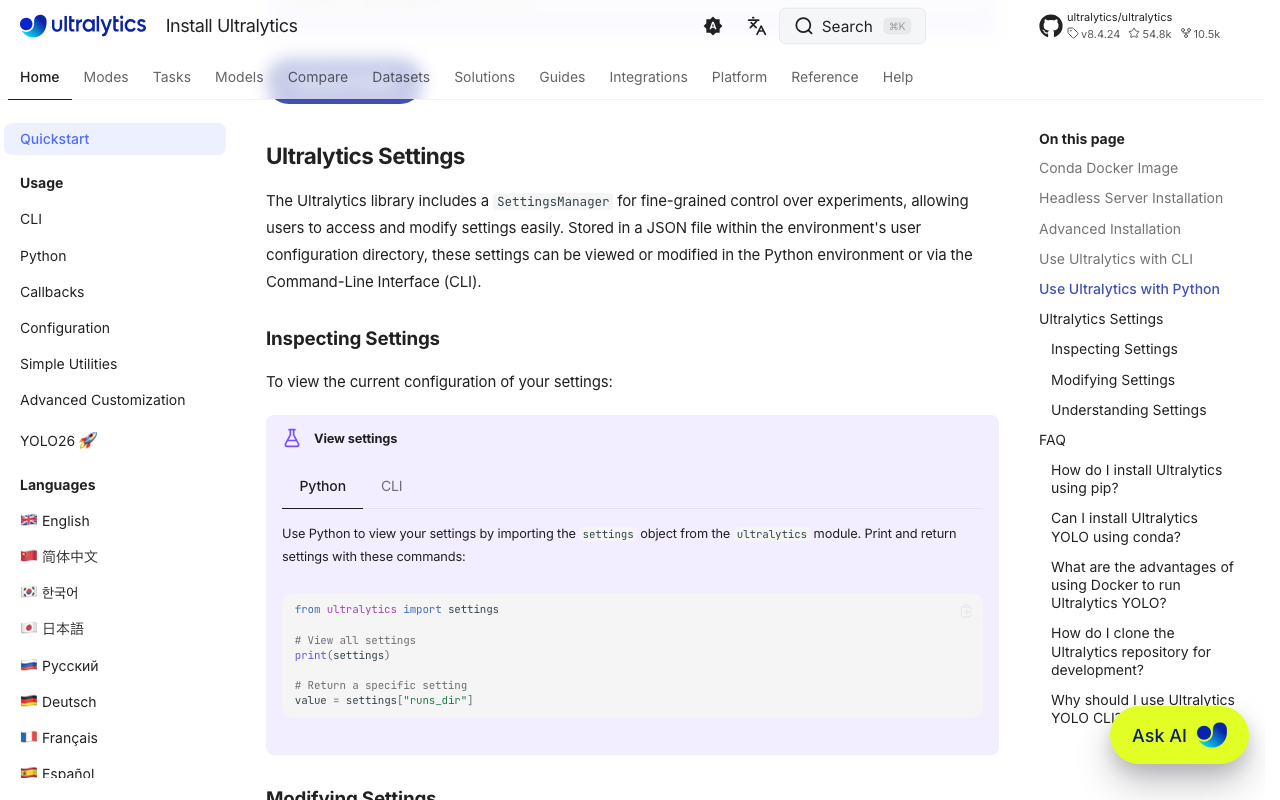

Overview

This is a note on how to add annotations to videos using iiif-prezi3.

Adding Annotations

Amazon Rekognition's label detection is used.

https://docs.aws.amazon.com/rekognition/latest/dg/labels.html?pg=ln&sec=ft

Sample code is available at the following link.

https://docs.aws.amazon.com/ja_jp/rekognition/latest/dg/labels-detecting-labels-video.html

In particular, setting the aggregation in GetLabelDetection to SEGMENTS returns StartTimestampMillis and EndTimestampMillis. Note, however, that when results are aggregated by SEGMENTS, information about detected instances with bounding boxes is not returned.

Data Used

The video "Prefectural News Vol. 1" (Nagano Prefectural Library) is used. ...
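As a rough illustration of the SEGMENTS aggregation mentioned under Adding Annotations, the sketch below turns a GetLabelDetection-style response into time ranges written as media fragments (`t=start,end` in seconds), the form commonly used to point an annotation at part of a video. This is not the original post's code: the function names, the `min_confidence` threshold, and the sample response values are hypothetical, and `fetch_segment_labels` assumes that boto3 is installed, AWS credentials are configured, and a label detection job has already finished.

```python
from __future__ import annotations

from typing import Any


def fetch_segment_labels(job_id: str) -> dict[str, Any]:
    """Fetch all label segments for a finished job (assumes boto3 and credentials)."""
    import boto3  # imported lazily so the rest of the sketch runs without AWS

    client = boto3.client("rekognition")
    labels: list[dict[str, Any]] = []
    token = None
    while True:
        kwargs: dict[str, Any] = {"JobId": job_id, "AggregateBy": "SEGMENTS"}
        if token:
            kwargs["NextToken"] = token
        page = client.get_label_detection(**kwargs)
        labels.extend(page.get("Labels", []))
        token = page.get("NextToken")
        if not token:
            return {"Labels": labels}


def segments_to_time_ranges(
    response: dict[str, Any], min_confidence: float = 80.0
) -> list[dict[str, str]]:
    """Convert label segments into {"name", "fragment"} records.

    min_confidence is an illustrative cutoff, not a value from the post.
    """
    ranges = []
    for item in response.get("Labels", []):
        label = item.get("Label", {})
        if label.get("Confidence", 0.0) < min_confidence:
            continue
        start = item["StartTimestampMillis"] / 1000  # milliseconds to seconds
        end = item["EndTimestampMillis"] / 1000
        ranges.append({"name": label["Name"], "fragment": f"t={start:g},{end:g}"})
    return ranges


# Hypothetical response fragment shaped like the SEGMENTS output.
sample_response = {
    "Labels": [
        {
            "StartTimestampMillis": 0,
            "EndTimestampMillis": 5500,
            "Label": {"Name": "Person", "Confidence": 98.2},
        },
        {
            "StartTimestampMillis": 5500,
            "EndTimestampMillis": 12000,
            "Label": {"Name": "Building", "Confidence": 91.0},
        },
        {
            "StartTimestampMillis": 12000,
            "EndTimestampMillis": 13000,
            "Label": {"Name": "Tree", "Confidence": 42.0},
        },
    ]
}

time_ranges = segments_to_time_ranges(sample_response)
for r in time_ranges:
    print(r["name"], r["fragment"])
```

Each resulting fragment could then be appended to the video canvas URI as the annotation target. Because the SEGMENTS aggregation does not return bounding boxes, these annotations carry only a time range and no spatial region.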
