Authenticating with ORCID, The Open Science Framework, and GakuNin RDM Using NextAuth.js

Overview

This article describes how to authenticate with ORCID, OSF (The Open Science Framework), and GRDM (GakuNin RDM) using NextAuth.js.

Demo Apps

ORCID https://orcid-app.vercel.app/

OSF https://osf-app.vercel.app/

GRDM https://rdm-app.vercel.app/

Repository

ORCID https://github.com/nakamura196/orcid_app

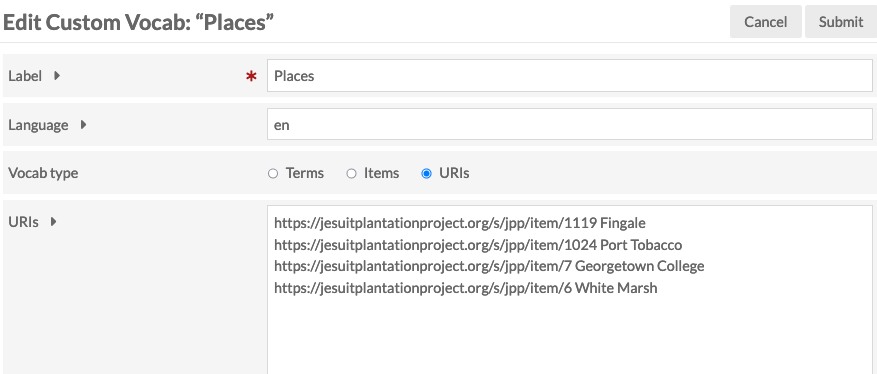

Below is an example of the options configuration.

https://github.com/nakamura196/orcid_app/blob/main/src/app/api/auth/[...nextauth]/authOptions.js

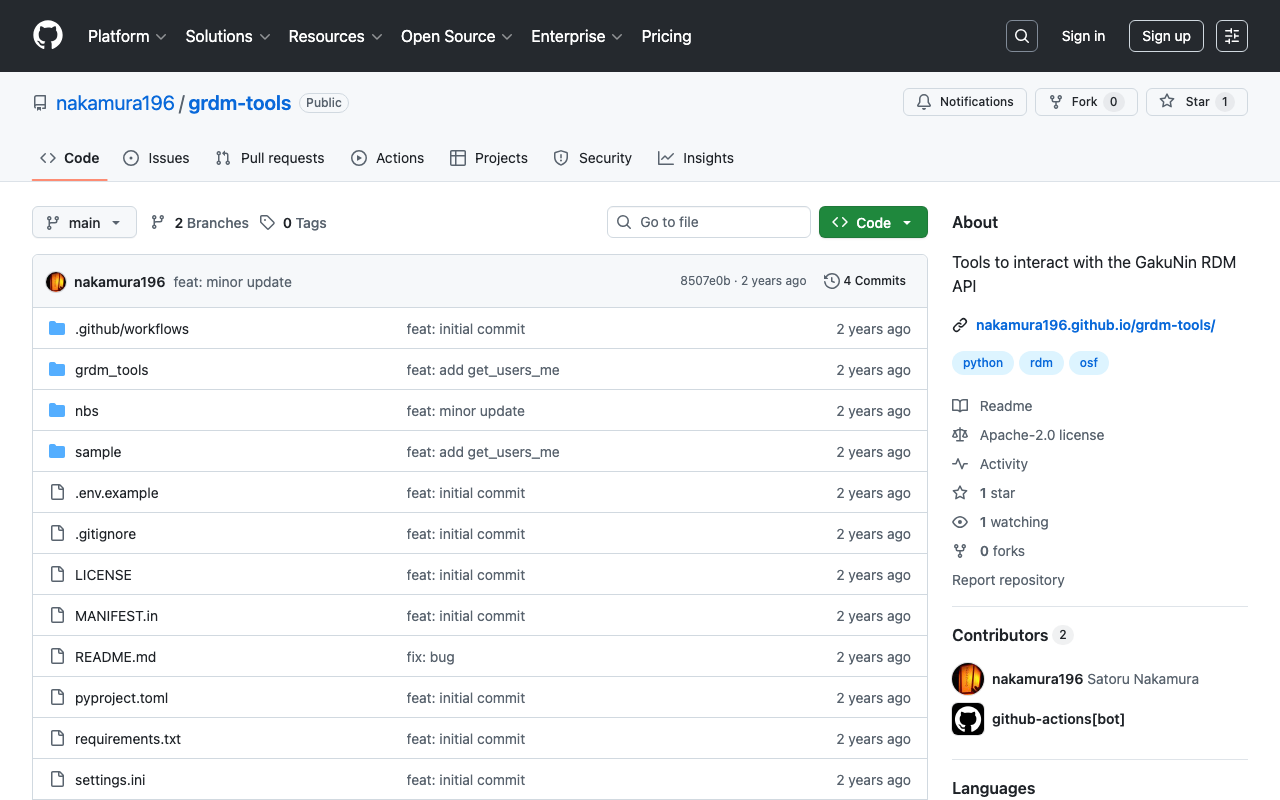
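
As a rough companion to the ORCID options configuration in this section, the sketch below replays what its `profile`, `jwt`, and `session` functions do, using plain JavaScript objects instead of a live sign-in. The function names `toUser`, `applyAccount`, and `applyToken`, the sample record, and the token value are hypothetical and not part of the repository. The sketch also assumes that the `orcid` field of ORCID's token response is what surfaces as `account.orcid`, as the configuration implies, and it joins only the name parts that are present, which is a small assumption of this sketch.

```javascript
// Hypothetical sample of an ORCID v3.0 record, trimmed to the fields the
// profile() mapping reads. Real records contain many more fields.
const sampleRecord = {
  "orcid-identifier": { path: "0000-0002-1825-0097" },
  person: {
    name: {
      "given-names": { value: "Josiah" },
      "family-name": { value: "Carberry" },
    },
    emails: { email: [] }, // empty here, so the mapped email is undefined
  },
};

// Mirrors profile(): reduce the ORCID record to { id, name, email }.
function toUser(profile) {
  const name = profile.person?.name;
  return {
    id: profile["orcid-identifier"].path,
    // Join only the name parts that are present (assumption of this sketch).
    name: [name?.["given-names"]?.value, name?.["family-name"]?.value]
      .filter(Boolean)
      .join(" "),
    email: profile.person?.emails?.email?.[0]?.email,
  };
}

// Mirrors the jwt callback: `account` is only passed at sign-in, so the
// values are copied onto the token once and carried along afterwards.
function applyAccount(token, account) {
  if (account) {
    token.accessToken = account.access_token;
    token.orcid = account.orcid;
  }
  return token;
}

// Mirrors the session callback: expose the stored values on the session.
function applyToken(session, token) {
  session.accessToken = token.accessToken;
  session.user.id = token.orcid;
  return session;
}

// Hypothetical account object carrying the fields the jwt callback reads.
const account = { access_token: "example-token", orcid: "0000-0002-1825-0097" };

const token = applyAccount({}, account); // call at sign-in
const later = applyAccount({ ...token }, undefined); // later call, no account
const session = applyToken({ user: { name: toUser(sampleRecord).name } }, later);

console.log(session);
```

Running this with Node.js prints a session object that carries the ORCID iD in `user.id` and the access token in `accessToken`. This is the same shape a client would read from the session once the callbacks in the configuration have run.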

    export const authOptions = {
      providers: [
        {
          id: "orcid",
          name: "ORCID",
          type: "oauth",
          clientId: process.env.ORCID_CLIENT_ID,
          clientSecret: process.env.ORCID_CLIENT_SECRET,
          authorization: {
            url: "https://orcid.org/oauth/authorize",
            params: {
              scope: "/authenticate",
              response_type: "code",
              redirect_uri: process.env.NEXTAUTH_URL + "/api/auth/callback/orcid",
            },
          },
          token: "https://orcid.org/oauth/token",
          userinfo: {
            url: "https://pub.orcid.org/v3.0/[ORCID]",
            // Fetch the public record for the ORCID iD returned with the token
            async request({ tokens }) {
              const res = await fetch(`https://pub.orcid.org/v3.0/${tokens.orcid}`, {
                headers: {
                  Authorization: `Bearer ${tokens.access_token}`,
                  Accept: "application/json",
                },
              });
              return await res.json();
            },
          },
          profile(profile) {
            const name = profile.person?.name;
            return {
              id: profile["orcid-identifier"].path, // ORCID iD
              // Join only the parts that exist, so a missing name does not
              // become "undefined undefined"
              name: [name?.["given-names"]?.value, name?.["family-name"]?.value]
                .filter(Boolean)
                .join(" "),
              email: profile.person?.emails?.email?.[0]?.email,
            };
          },
        },
      ],
      callbacks: {
        async session({ session, token }) {
          session.accessToken = token.accessToken;
          session.user.id = token.orcid; // Add ORCID iD to session
          return session;
        },
        async jwt({ token, account }) {
          // account is only present at sign-in
          if (account) {
            token.accessToken = account.access_token;
            token.orcid = account.orcid;
          }
          return token;
        },
      },
    };

OSF https://github.com/nakamura196/osf-app

...

November 15, 2024 · Updated: November 15, 2024 · 4 min · Nakamura