Aggregations with Different Keys and Values (Labels and IDs) in Elasticsearch

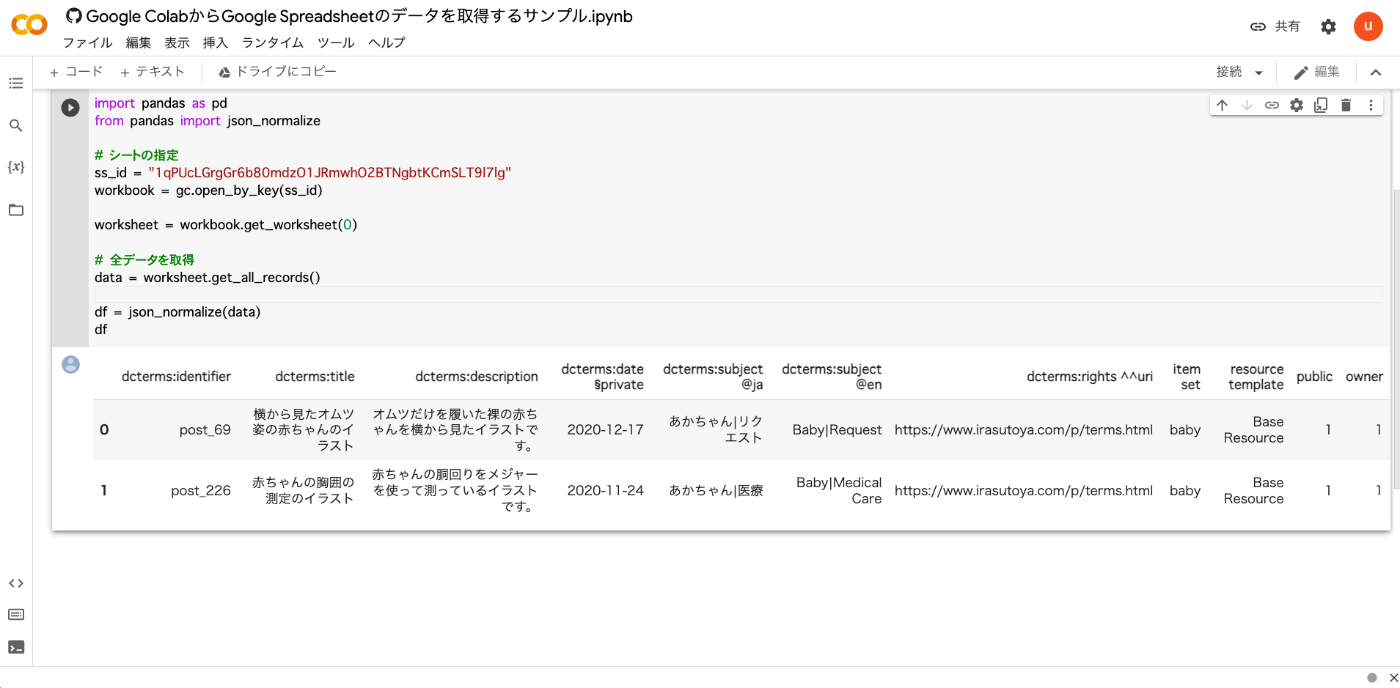

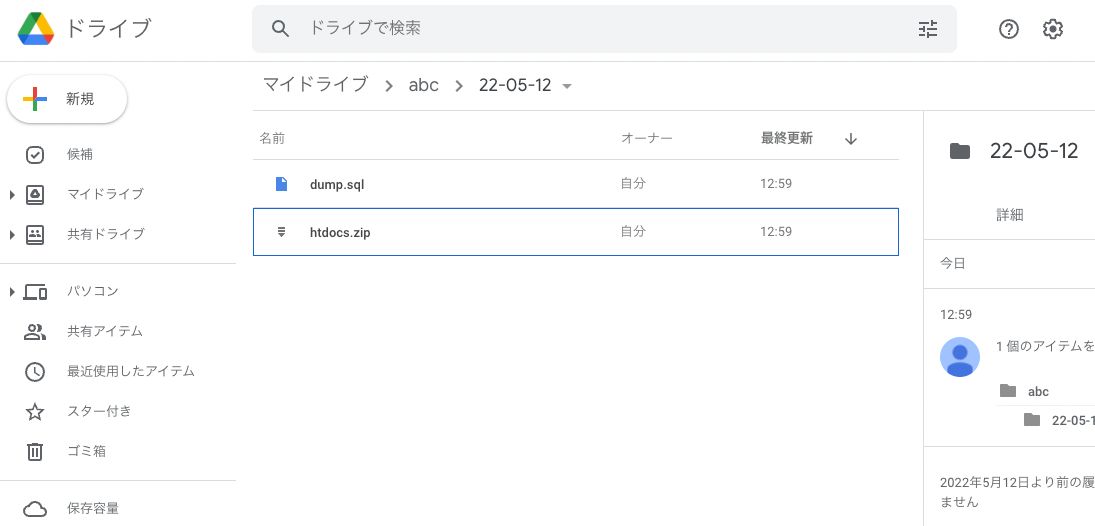

Overview

I am currently updating the search application for the Cultural Japan project, and I needed to run aggregations on multilingual data. This article is a memo of what I found while investigating how to do that.

Data

For the data, we assume a case where the agential field (indicating a person) has values for id, ja, and en.

{ "agential": [ { "ja": "葛飾北斎", "en": "Katsushika, Hokusai", "id": "chname:葛飾北斎" } ] }

For the above data, we want to filter by id while displaying the ja or en value, depending on the language setting.

...
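As a rough illustration of the requirement described above (bucket by id, display a language-specific label), here is a minimal Python sketch. It rests on assumptions not stated in the text: that agential is mapped as a `nested` field and that its id, ja, and en subfields are `keyword` fields. The helper names `build_agg_query` and `parse_buckets` are hypothetical, and `SAMPLE_RESPONSE` is hand-written for illustration, not real Elasticsearch output. The sketch only builds the request body and reads a response, so it does not depend on any client library.

```python
from typing import Any


def build_agg_query(field: str = "agential", lang: str = "ja", size: int = 10) -> dict[str, Any]:
    """Build a search body that buckets by `<field>.id` and, inside each
    bucket, looks up the matching `<field>.<lang>` label.

    Assumption: `field` is a nested field with keyword subfields.
    """
    return {
        "size": 0,  # only the aggregation result is needed, not the hits
        "aggs": {
            field: {
                "nested": {"path": field},
                "aggs": {
                    "id": {
                        "terms": {"field": f"{field}.id", "size": size},
                        "aggs": {
                            # One label per id is enough for display.
                            "label": {"terms": {"field": f"{field}.{lang}", "size": 1}}
                        },
                    }
                },
            }
        },
    }


def parse_buckets(response: dict[str, Any], field: str = "agential") -> list[dict[str, Any]]:
    """Flatten the aggregation response into rows of id, label, and count."""
    rows = []
    for bucket in response["aggregations"][field]["id"]["buckets"]:
        labels = bucket["label"]["buckets"]
        # Fall back to the id when no label exists for the chosen language.
        label = labels[0]["key"] if labels else bucket["key"]
        rows.append({"id": bucket["key"], "label": label, "count": bucket["doc_count"]})
    return rows


# Hand-written response in the shape Elasticsearch uses for nested + terms aggregations.
SAMPLE_RESPONSE = {
    "aggregations": {
        "agential": {
            "doc_count": 1,
            "id": {
                "buckets": [
                    {
                        "key": "chname:葛飾北斎",
                        "doc_count": 1,
                        "label": {"buckets": [{"key": "Katsushika, Hokusai", "doc_count": 1}]},
                    }
                ]
            },
        }
    }
}

rows = parse_buckets(SAMPLE_RESPONSE)
```

With this shape, the user interface can show `label` to the user while sending `id` back as the filter value, so switching `lang` between `"ja"` and `"en"` changes only what is displayed, not what is filtered.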